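Container Storage Interface (CSI) is an interface specification. It aims to define a standard set of storage calls between container orchestration systems (COs) and storage systems, so that storage systems can provide storage services to container orchestrators through that interface.

CSI is independent of Kubernetes. It is a storage interface standard for the whole container ecosystem, including Kubernetes, Mesos, Docker and Cloud Foundry. Kubernetes introduced CSI (as alpha) in v1.9, and CSI support went GA in v1.13.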

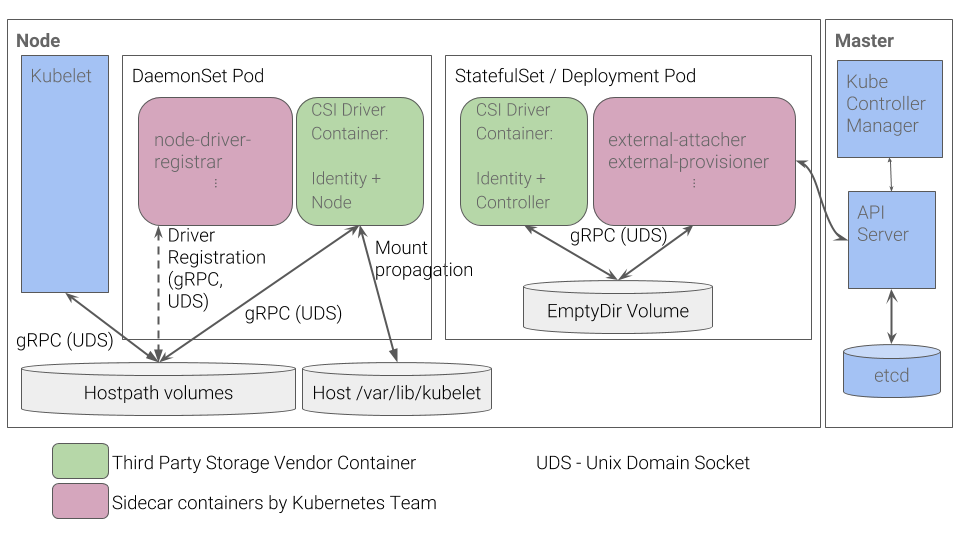

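Like CRI, CSI is built on gRPC. kube-controller-manager does not call CSI plugins directly. Instead, the kubelet discovers CSI drivers through the kubelet plugin registration mechanism. It then issues CSI calls (such as NodeStageVolume and NodePublishVolume) directly to the driver over a Unix domain socket to mount and unmount volumes.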

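On the controller side, Kubernetes provides sidecar containers; the CSI controller plugin is typically deployed as a StatefulSet or Deployment.

- The External Attacher watches VolumeAttachment and PersistentVolume objects. It calls the plugin's ControllerPublishVolume and ControllerUnpublishVolume APIs to attach volumes to, or detach them from, a given node.
- The External Provisioner watches PersistentVolumeClaim objects. It calls the plugin's CreateVolume and DeleteVolume APIs to create and delete volumes.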
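To make the division of labor concrete, here is a small illustrative lookup written as a shell function. It is not a real tool or API. The event labels (pvc-created and so on) are hypothetical names chosen for this sketch. It only records which component issues which CSI RPCs in the sidecar-based design, assuming a driver that advertises the STAGE_UNSTAGE_VOLUME node capability (otherwise the kubelet skips NodeStageVolume/NodeUnstageVolume).

```bash
#!/usr/bin/env bash
# Illustrative only: map a hypothetical Kubernetes event label to the
# component that reacts to it and the CSI RPCs that component issues.
csi_calls_for() {
  case "$1" in
    pvc-created)
      echo "external-provisioner: CreateVolume" ;;
    pv-released)               # PV released with reclaimPolicy: Delete
      echo "external-provisioner: DeleteVolume" ;;
    volumeattachment-created)
      echo "external-attacher: ControllerPublishVolume" ;;
    volumeattachment-deleted)
      echo "external-attacher: ControllerUnpublishVolume" ;;
    pod-scheduled-on-node)     # staging assumes STAGE_UNSTAGE_VOLUME support
      echo "kubelet: NodeStageVolume NodePublishVolume" ;;
    pod-deleted-from-node)
      echo "kubelet: NodeUnpublishVolume NodeUnstageVolume" ;;
    *)
      echo "unknown event: $1" >&2
      return 1 ;;
  esac
}
```

For example, `csi_calls_for volumeattachment-created` prints `external-attacher: ControllerPublishVolume`.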

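For the volume types Kubernetes supports, see the official documentation: https://kubernetes.io/docs/concepts/storage/#types-of-volumes

Among the storage types used with Kubernetes, RBD block storage is one of the most stable and widely used, so this storage series opens with RBD.

RBD Block Storage

RBD stands for RADOS Block Device. Block storage essentially maps a raw disk, or a raw-disk-like device such as an LVM volume, to a host. Like a disk, an RBD block device can be mounted, and the host can format it and store and read data on it. RBD block devices support snapshots, replication, cloning and consistency. Block devices read quickly but cannot be shared, so an RBD-backed PersistentVolumeClaim cannot be mounted read-write by multiple Pods at the same time.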

Ceph

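Ceph is a unified distributed storage system known for its scalability, performance and reliability. It provides block storage (RBD), object storage (RGW) and file system storage (CephFS). It is currently a mainstream storage backend for both OpenStack and Kubernetes.

Inside Kubernetes, Ceph can be deployed with Rook. Rook is an open-source, cloud-native storage orchestrator that provides the platform, framework and support for a variety of storage solutions to integrate natively with cloud-native environments.

On bare metal, ceph-deploy can be used for a quick deployment, but it does not support RHEL 8-family operating systems. This walkthrough uses CentOS 8, so the Ceph cluster is installed manually.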

| Host | OS | Kernel | Data disk |
| --- | --- | --- | --- |
| ceph01 | CentOS Linux 8 | 5.4.38 | 20G |
| ceph02 | CentOS Linux 8 | 5.4.38 | 20G |
| ceph03 | CentOS Linux 8 | 5.4.38 | 20G |

1. Initialize the environment

Tuning

cat > /etc/rc.d/init.d/ceph-init <<EOF
#!/bin/bash
# Provides:
# chkconfig: - 5 50
# description: ceph-init.
# Raise the open file descriptor limit (only affects processes started from this script)
ulimit -SHn 102400
# read_ahead: prefetch data into RAM to speed up disk reads (vdb is the data disk here)
echo "8192" > /sys/block/vdb/queue/read_ahead_kb
EOF
chmod +x /etc/rc.d/init.d/ceph-init
cd /etc/rc.d/init.d
chkconfig --add ceph-init
chkconfig ceph-init on
cat >> /etc/security/limits.conf << EOF
* soft nofile 65535
* hard nofile 65535
EOF

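I/O scheduler: use noop for SSDs and deadline for SATA/SAS disks; replace [x] with the device letter. On blk-mq kernels (5.0 and later, including the 5.4 kernel used here), the legacy noop scheduler no longer exists, so use none instead. The name deadline is still accepted as an alias for mq-deadline.

echo "deadline" >/sys/block/sd[x]/queue/scheduler
echo "none" >/sys/block/sd[x]/queue/scheduler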
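The choice between the two schedulers can also be made from the disk's rotational flag. A minimal sketch follows. It assumes that /sys/block/<dev>/queue/rotational reports 0 for SSDs and 1 for spinning disks, but virtual disks such as virtio vdb may report 1 even on SSD-backed storage. The function names pick_scheduler and apply_scheduler are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: choose a blk-mq I/O scheduler from a disk's rotational flag.
# Assumption: rotational is 0 for SSDs and 1 for HDDs (VMs may misreport).
pick_scheduler() {
  if [ "$1" = "0" ]; then
    echo "none"          # SSD: no request reordering needed
  else
    echo "mq-deadline"   # SATA/SAS spinning disk
  fi
}

# Apply the choice to a device such as sdb or vdb (requires root).
apply_scheduler() {
  local dev="$1" rot
  rot=$(cat "/sys/block/${dev}/queue/rotational") || return 1
  pick_scheduler "$rot" > "/sys/block/${dev}/queue/scheduler"
}
```

After `apply_scheduler vdb`, `cat /sys/block/vdb/queue/scheduler` shows the active scheduler in brackets. Like the echo commands above, the setting does not survive a reboot unless it is added to a boot script such as ceph-init.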

Kernel parameter tuning

cat > /etc/sysctl.d/ceph.conf << EOF
kernel.pid_max = 4194303
vm.swappiness = 0
net.core.rmem_default = 80331648
net.core.rmem_max = 80331648
net.core.wmem_default = 33554432
net.core.wmem_max = 50331648
vm.dirty_ratio = 10
vm.dirty_background_ratio = 5
EOF
# Load the new file explicitly; sysctl -p without an argument only reads /etc/sysctl.conf
sysctl -p /etc/sysctl.d/ceph.conf

Configure the yum repository

cat << EOF | tee /etc/yum.repos.d/ceph.repo
[Ceph]
name=Ceph packages for \$basearch
baseurl=http://mirrors.163.com/ceph/rpm-octopus/el8/\$basearch
enabled=1
gpgcheck=1
type=rpm-md
gpgkey=https://download.ceph.com/keys/release.asc
priority=1

[Ceph-noarch]
name=Ceph noarch packages
baseurl=http://mirrors.163.com/ceph/rpm-octopus/el8/noarch
enabled=1
gpgcheck=1
type=rpm-md
gpgkey=https://download.ceph.com/keys/release.asc
priority=1

[ceph-source]
name=Ceph source packages
baseurl=http://mirrors.163.com/ceph/rpm-octopus/el8/SRPMS
enabled=1
gpgcheck=1
type=rpm-md
gpgkey=https://download.ceph.com/keys/release.asc
EOF

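Time synchronization

Omitted here. Ceph monitors are sensitive to clock skew, so make sure chrony (or another NTP client) keeps all nodes in sync.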

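2. Install Ceph

Install Ceph 15.2.1 (Octopus, the latest release at the time of writing) on all three nodes:

yum install -y ceph-15.2.1 ceph-common-15.2.1 ceph-radosgw-15.2.1

Ceph version numbering

x.0.z: development release; x.1.z: release candidate; x.2.z: stable release.

x is the major release number. Releases are named in alphabetical order (A to Z), so the "o" in Octopus, the 15th letter of the alphabet, corresponds to major version 15.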
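The numbering scheme above can be decoded mechanically. A minimal sketch follows, assuming a plain x.y.z version string. The function name ceph_release_info is hypothetical. It only applies the x.y.z rules and the "major version = letter position" rule described above, so it returns the codename's initial letter, not the full name.

```bash
#!/usr/bin/env bash
# Sketch: interpret a Ceph version string such as "15.2.1".
ceph_release_info() {
  local version="$1" major rest minor stage letter
  major=${version%%.*}      # "15"
  rest=${version#*.}        # "2.1"
  minor=${rest%%.*}         # "2"
  case "$minor" in
    0) stage="development" ;;
    1) stage="release-candidate" ;;
    2) stage="stable" ;;
    *) stage="unknown" ;;
  esac
  # The codename starts with the major-th letter of the alphabet (1 = a).
  letter=$(printf "\\$(printf '%03o' $((96 + major)))")
  echo "$major $stage $letter"
}
```

For example, `ceph_release_info 15.2.1` prints `15 stable o`.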

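3. Configure Ceph

[root@ceph01 ~]# cd /etc/ceph
# Generate the fsid (cluster UUID)
[root@k8s-master01 ceph]# uuidgen
adaac7ee-d780-4b8d-9a87-5c827318bf9d
# Use the generated UUID as fsid in ceph.conf and pass the same value to monmaptool.
# The rest of this walkthrough uses e6c1f101-00e7-48ff-8ac0-c5224ec6e78d.
[root@k8s-master01 ceph]# vi ceph.conf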

[global]
fsid = e6c1f101-00e7-48ff-8ac0-c5224ec6e78d
mon_initial_members = ceph01, ceph02, ceph03
mon_host = 172.31.250.207,172.31.250.208,172.31.250.209
auth_cluster_required = cephx
auth_service_required = cephx
auth_client_required = cephx
osd pool default size = 3
osd pool default min size = 1
public network = 172.31.250.0/20,192.168.0.0/16
cluster network = 172.31.250.0/20
max open files = 131072

# Each monitor section is named after its monitor ID (the name passed to ceph-mon -i)
[mon.ceph01]
host = ceph01
mon addr = 172.31.250.207:6789

[mon.ceph02]
host = ceph02
mon addr = 172.31.250.208:6789

[mon.ceph03]
host = ceph03
mon addr = 172.31.250.209:6789

[mon]
mon allow pool delete = true
mon clock drift allowed = 1
mon osd min down reporters = 1
mon osd down out interval = 600
mgr initial modules = dashboard,prometheus
mon_data_avail_warn = 10

[osd]
# osd journal size, osd mount options xfs and the filestore/journal options below
# only affect FileStore OSDs; the BlueStore OSDs created later ignore them.
osd crush update on start = true
osd journal size = 20000
osd max write size = 512
osd client message size cap = 2147483648
osd deep scrub stride = 131072
osd op threads = 16
osd disk threads = 4
osd map cache size = 1024
osd map cache bl size = 128
osd mount options xfs = "rw,noexec,nodev,noatime,nodiratime,nobarrier"
osd recovery op priority = 2
osd recovery max active = 10
osd max backfills = 4
osd min pg log entries = 30000
osd max pg log entries = 100000
osd mon heartbeat interval = 40
ms dispatch throttle bytes = 1048576000
objecter inflight ops = 819200
osd op log threshold = 50
osd crush chooseleaf type = 0
filestore xattr use omap = true
filestore min sync interval = 10
filestore max sync interval = 15
filestore queue max ops = 25000
filestore queue max bytes = 1048576000
filestore queue committing max ops = 50000
filestore queue committing max bytes = 10485760000
filestore split multiple = 8
filestore merge threshold = 40
filestore fd cache size = 1024
filestore op threads = 32
journal max write bytes = 1073714824
journal max write entries = 10000
journal queue max ops = 50000
journal queue max bytes = 10485760000

[client]
rbd cache = true
rbd cache size = 335544320
rbd cache max dirty = 134217728
rbd cache max dirty age = 30
rbd cache writethrough until flush = false
rbd cache max dirty object = 2
# rbd cache target dirty must be smaller than rbd cache max dirty
rbd cache target dirty = 67108864

4. Create the monitors

# Create a keyring for the cluster and generate a monitor secret key.
ceph-authtool --create-keyring /etc/ceph/ceph.mon.keyring --gen-key -n mon. --cap mon 'allow *'
# Generate the administrator keyring: create the client.admin user and add it to the keyring.
ceph-authtool --create-keyring /etc/ceph/ceph.client.admin.keyring --gen-key -n client.admin --cap mon 'allow *' --cap osd 'allow *' --cap mds 'allow *' --cap mgr 'allow *'
# Generate the bootstrap-osd keyring: create the client.bootstrap-osd user and add it to the keyring.
ceph-authtool --create-keyring /var/lib/ceph/bootstrap-osd/ceph.keyring --gen-key -n client.bootstrap-osd --cap mon 'profile bootstrap-osd' --cap mgr 'allow r'
# Import the generated keys into ceph.mon.keyring.
ceph-authtool /etc/ceph/ceph.mon.keyring --import-keyring /etc/ceph/ceph.client.admin.keyring
ceph-authtool /etc/ceph/ceph.mon.keyring --import-keyring /var/lib/ceph/bootstrap-osd/ceph.keyring
# Generate a monitor map from the host names, IP addresses and fsid, and save it as /etc/ceph/monmap:
monmaptool --create --add ceph01 172.31.250.207 --add ceph02 172.31.250.208 --add ceph03 172.31.250.209 --fsid e6c1f101-00e7-48ff-8ac0-c5224ec6e78d /etc/ceph/monmap
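The per-node monitor steps need ceph02 and ceph03 to have the files generated so far on ceph01. Here is a minimal sketch of that copy step. It assumes passwordless root SSH from ceph01 to the other nodes. The function name sync_ceph_files and the SCP override (set SCP=echo for a dry run that only prints the commands) are hypothetical conveniences, not part of Ceph.

```bash
#!/usr/bin/env bash
# Sketch: copy the bootstrap files generated on ceph01 to other monitor hosts.
# Assumes passwordless root SSH; SCP=echo turns this into a dry run.
CEPH_FILES="/etc/ceph/ceph.conf
/etc/ceph/ceph.mon.keyring
/etc/ceph/ceph.client.admin.keyring
/etc/ceph/monmap
/var/lib/ceph/bootstrap-osd/ceph.keyring"

sync_ceph_files() {
  local host f
  for host in "$@"; do
    for f in $CEPH_FILES; do
      # Keep the same absolute path on the destination host.
      "${SCP:-scp}" -p "$f" "root@${host}:${f}" || return 1
    done
  done
}
```

Run it on ceph01 as `sync_ceph_files ceph02 ceph03`. The bootstrap-osd keyring is included because each node needs it later when creating its OSD.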

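Sync the Ceph configuration, together with the generated keyrings and monmap, to the other two nodes. The following steps must be run on all three nodes.

# Create the default monitor data directory on each monitor host
# (assumes the short host names are ceph01, ceph02 and ceph03)
sudo -u ceph mkdir /var/lib/ceph/mon/ceph-$(hostname -s)
# Change the owner of /etc/ceph, including ceph.mon.keyring, to ceph
chown ceph:ceph -R /etc/ceph/
# Populate the monitor daemon with the monitor map and keyring, using this node's monitor ID
sudo -u ceph ceph-mon --mkfs -i $(hostname -s) --monmap /etc/ceph/monmap --keyring /etc/ceph/ceph.mon.keyring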

Start the monitor on all three nodes:

[root@ceph01 ~]# systemctl start ceph-mon@ceph01
[root@ceph02 ~]# systemctl start ceph-mon@ceph02
[root@ceph03 ~]# systemctl start ceph-mon@ceph03

[root@ceph01 ceph]# ceph -s
  cluster:
    id:     adaac7ee-d780-4b8d-9a87-5c827318bf9d
    health: HEALTH_WARN
            3 monitors have not enabled msgr2

  services:
    mon: 3 daemons, quorum ceph01,ceph02,ceph03 (age 64s)
    mgr: no daemons active
    osd: 0 osds: 0 up, 0 in

  data:
    pools:   0 pools, 0 pgs
    objects: 0 objects, 0 B
    usage:   0 B used, 0 B / 0 B avail
    pgs:

# ceph03 will also be the mgr node; run the following on ceph03
[root@ceph03 ceph]# ceph mon enable-msgr2
# Restart the monitor
[root@ceph03 ceph]# systemctl restart ceph-mon@ceph03
# Check the cluster status

[root@ceph01 ceph]# ceph -s
  cluster:
    id:     adaac7ee-d780-4b8d-9a87-5c827318bf9d
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum ceph01,ceph02,ceph03 (age 2m)
    mgr: no daemons active
    osd: 0 osds: 0 up, 0 in

  data:
    pools:   0 pools, 0 pgs
    objects: 0 objects, 0 B
    usage:   0 B used, 0 B / 0 B avail
    pgs:

5. Create the mgr on ceph03

ceph auth get-or-create mgr.ceph03 mon 'allow profile mgr' osd 'allow *' mds 'allow *'
sudo -u ceph mkdir /var/lib/ceph/mgr/ceph-ceph03
ceph auth get mgr.ceph03 -o /var/lib/ceph/mgr/ceph-ceph03/keyring
systemctl start ceph-mgr@ceph03
systemctl status ceph-mgr@ceph03

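6. Create the OSDs

The official recommendation is to create OSDs with BlueStore, which is more efficient than FileStore and well optimized for SSDs.

Run the following on each of the three nodes; each node needs /var/lib/ceph/bootstrap-osd/ceph.keyring. To create the OSD in a single step, run ceph-volume lvm create, which performs prepare and activate:

sudo ceph-volume lvm create --data /dev/vdb

Alternatively, prepare and activate the OSD as two separate steps:

# Prepare the OSD
sudo ceph-volume lvm prepare --data /dev/vdb
# Activate the OSD: look up its ID and fsid with ceph-volume lvm list, then activate it (2 is the OSD ID on this node)
sudo ceph-volume lvm list
sudo ceph-volume lvm activate 2 {osd-fsid}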

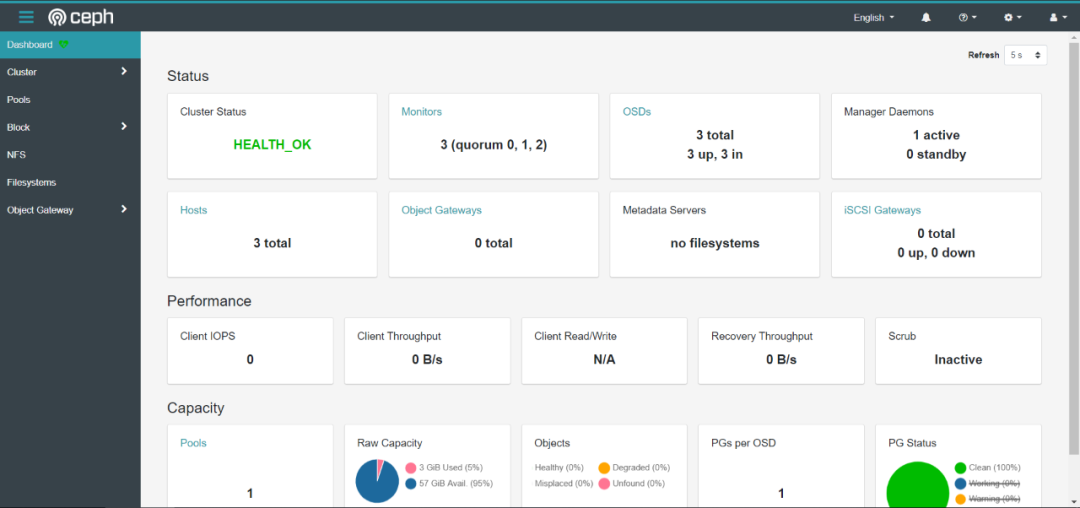

[root@ceph03 ~]# ceph -s
  cluster:
    id:     e6c1f101-00e7-48ff-8ac0-c5224ec6e78d
    health: HEALTH_OK

  services:
    mon: 3 daemons, quorum ceph01,ceph02,ceph03 (age 15m)
    mgr: ceph03(active, since 14m)
    osd: 3 osds: 3 up (since 100s), 3 in (since 100s)

  data:
    pools:   1 pools, 1 pgs
    objects: 0 objects, 0 B
    usage:   3.0 GiB used, 57 GiB / 60 GiB avail
    pgs:     1 active+clean

7. Enable the mgr dashboard and prometheus modules

# In newer releases the dashboard ships as a separate package that is not installed by default
yum install -y ceph-mgr-dashboard
# Option 1: generate and install a self-signed certificate
ceph dashboard create-self-signed-cert
# Option 2: import your own certificate and key, globally or for a specific mgr instance (ceph03)
ceph dashboard set-ssl-certificate -i dashboard.crt
ceph dashboard set-ssl-certificate-key -i dashboard.key
ceph dashboard set-ssl-certificate ceph03 -i dashboard.crt
ceph dashboard set-ssl-certificate-key ceph03 -i dashboard.key
# Bind address and HTTPS port
ceph config set mgr mgr/dashboard/server_addr 172.31.250.209
ceph config set mgr mgr/dashboard/ssl_server_port 7443
# Each ceph-mgr host runs its own dashboard, so the address and port can also be set per instance ($name is the mgr name):
$ ceph config set mgr mgr/dashboard/$name/server_addr $IP
$ ceph config set mgr mgr/dashboard/$name/server_port $PORT
$ ceph config set mgr mgr/dashboard/$name/ssl_server_port $PORT
# Create the initial administrator user (later releases expect the password in a file passed with -i)
ceph dashboard ac-user-create ceph Ceph1234 administrator
# Reload the module
ceph mgr module disable dashboard
ceph mgr module enable dashboard
# prometheus
ceph config set mgr mgr/prometheus/server_addr 172.31.250.209
ceph mgr module disable prometheus
ceph mgr module enable prometheus
# Check the service endpoints
[root@ceph01 ~]# ceph mgr services
{
    "dashboard": "https://172.31.250.209:7443/",
    "prometheus": "http://172.31.250.209:9283/"
}
[root@ceph01 ~]#

Open the dashboard URL in a browser. The prometheus endpoint can also be scraped by an existing Prometheus monitoring system.

Providing storage for Kubernetes

1. Create a pool for Kubernetes and initialize it

ceph osd pool create rbd 128

rbd pool init rbd
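The 128 passed to `ceph osd pool create` is the placement-group count. A widely used rule of thumb from the Ceph placement-group documentation is:

total PGs = (number of OSDs × 100) / replica count, rounded up to the next power of two.

This cluster has 3 OSDs and `osd pool default size = 3`, so the rule gives 3 × 100 / 3 = 100, rounded up to 128, which happens to match the value used above. A minimal sketch of the calculation follows; the function name pg_count is hypothetical. Octopus also enables the PG autoscaler for new pools by default, so Ceph may adjust pg_num later on its own.

```bash
#!/usr/bin/env bash
# Sketch of the classic PG rule of thumb:
#   (OSDs * target PGs per OSD) / replicas, rounded up to a power of two.
# The target defaults to 100 PGs per OSD.
pg_count() {
  local osds="$1" replicas="$2" per_osd="${3:-100}"
  local raw=$(( osds * per_osd / replicas ))
  local pgs=1
  while [ "$pgs" -lt "$raw" ]; do
    pgs=$(( pgs * 2 ))
  done
  echo "$pgs"
}
```

For example, `pg_count 3 3` prints `128`.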

2. Create a user

ceph auth add client.k8s mon 'allow rx' osd 'allow rwx pool=rbd'
# The k8s user only has read/write access to the rbd pool; check with ceph auth list:
client.k8s
        key: AQDHu69engcWBRAAn9LJ4M8IAsh96dO/8MC7Xw==
        caps: [mon] allow rx
        caps: [osd] allow rwx pool=rbd

3. Prepare the Ceph key for the Kubernetes Secret (base64-encode the client.k8s key)

echo "$(ceph auth get-key client.k8s)"|base64

QVFESHU2OWVuZ2NXQlJBQW45TEo0TThJQXNoOTZkTy84TUM3WHc9PQo=
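`echo` appends a newline, so the value above encodes the key plus a trailing newline; the final `PQo=` decodes to `=` followed by `\n`. The in-tree plugin tolerated this here, as the bound PVC later in this walkthrough shows. Encoding the key without the newline avoids relying on that behavior.

A minimal sketch using printf and GNU coreutils base64 (`-w0` disables line wrapping) follows; the helper name k8s_secret_value is hypothetical.

```bash
#!/usr/bin/env bash
# Hypothetical helper: base64-encode a Ceph key for a Secret's data field
# without the trailing newline that echo would add.
k8s_secret_value() {
  printf '%s' "$1" | base64 -w0
}

# Typical use on a Ceph node:
#   k8s_secret_value "$(ceph auth get-key client.k8s)"
```

For this key the result ends in `PQ==` instead of `PQo=`. A Secret can also take the raw key through its `stringData` field, which skips manual base64 encoding entirely.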

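4. Create a StorageClass for dynamic provisioning

This StorageClass uses the in-tree kubernetes.io/rbd provisioner rather than the ceph-csi driver. The in-tree RBD plugin has been deprecated in newer Kubernetes releases in favor of ceph-csi. With the in-tree provisioner, the secret referenced by userSecretName must exist in the same namespace as the PVC, which here is the default namespace.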

[root@ceph01 ~]# vi storageclass-ceph.yaml
apiVersion: v1
kind: Secret
metadata:
  name: ceph-secret-k8s
type: "kubernetes.io/rbd"
data:
  key: QVFESHU2OWVuZ2NXQlJBQW45TEo0TThJQXNoOTZkTy84TUM3WHc9PQo=
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ceph-storage
provisioner: kubernetes.io/rbd
allowVolumeExpansion: true
parameters:
  monitors: 172.31.250.207:6789,172.31.250.208:6789,172.31.250.209:6789
  adminId: k8s
  adminSecretName: ceph-secret-k8s
  pool: rbd
  userId: k8s
  userSecretName: ceph-secret-k8s

[root@ceph01 ~]# kubectl apply -f storageclass-ceph.yaml

5. Create a test PVC

[root@ceph01 ~]# vi ceph-test-pvc.yaml
kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: ceph-test
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 1Gi
  storageClassName: ceph-storage

[root@ceph01 ~]# kubectl apply -f ceph-test-pvc.yaml

6. Verify

[root@ceph01 ~]# kubectl get pvc
NAME        STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
ceph-test   Bound    pvc-8d3e1368-97db-45bc-8cef-81302d94b6c1   1Gi        RWO            ceph-storage   68s
[root@ceph01 ~]# rbd list -p rbd
kubernetes-dynamic-pvc-34307dcc-ef5d-4a37-b716-fb8bd2f90e2b
[root@ceph01 ~]#

7. Create a test StatefulSet

[root@ceph01 ~]# vi busybox.yaml
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: busybox
spec:
  selector:
    matchLabels:
      app: busybox # has to match .spec.template.metadata.labels
  serviceName: "busybox"
  replicas: 2 # by default is 1
  template:
    metadata:
      labels:
        app: busybox # has to match .spec.selector.matchLabels
    spec:
      terminationGracePeriodSeconds: 10
      containers:
      - name: busybox
        image: busybox:1.31
        imagePullPolicy: IfNotPresent
        command:
        - sh
        - -c
        - while(true);do date ;sleep 3s ;done
        volumeMounts:
        - name: data
          mountPath: /data
  volumeClaimTemplates:
  - metadata:
      name: data
    spec:
      accessModes: [ "ReadWriteOnce" ]
      storageClassName: "ceph-storage"
      resources:
        requests:
          storage: 10Mi

[root@ceph01 ~]# kubectl create -f busybox.yaml

[root@ceph01 ~]# kubectl get po
NAME        READY   STATUS              RESTARTS   AGE
busybox-0   1/1     Running             0          24s
busybox-1   0/1     ContainerCreating   0          7s
[root@ceph01 ~]# kubectl get pvc
NAME             STATUS   VOLUME                                     CAPACITY   ACCESS MODES   STORAGECLASS   AGE
ceph-test        Bound    pvc-8d3e1368-97db-45bc-8cef-81302d94b6c1   1Gi        RWO            ceph-storage   27m
data-busybox-0   Bound    pvc-20a47ccc-fe40-472e-b14e-05fa3540e000   10Mi       RWO            ceph-storage   29s
data-busybox-1   Bound    pvc-dfb3dc71-bd0b-42d3-8df1-ea890378a7ef   10Mi       RWO            ceph-storage   12s
[root@ceph01 ~]# kubectl exec -it busybox-0 -- sh
/ # cd /data/
/data # echo 1111 >111.log
/data # df -h | grep /data
/dev/rbd0                 8.7M    173.0K      8.3M   2% /data
