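In this technical article, I walk through the complete process of configuring and initializing a Kubernetes cluster: preparing the hosts, installing the Kubernetes components, initializing the cluster, installing the network plugin, and deploying MogDB.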

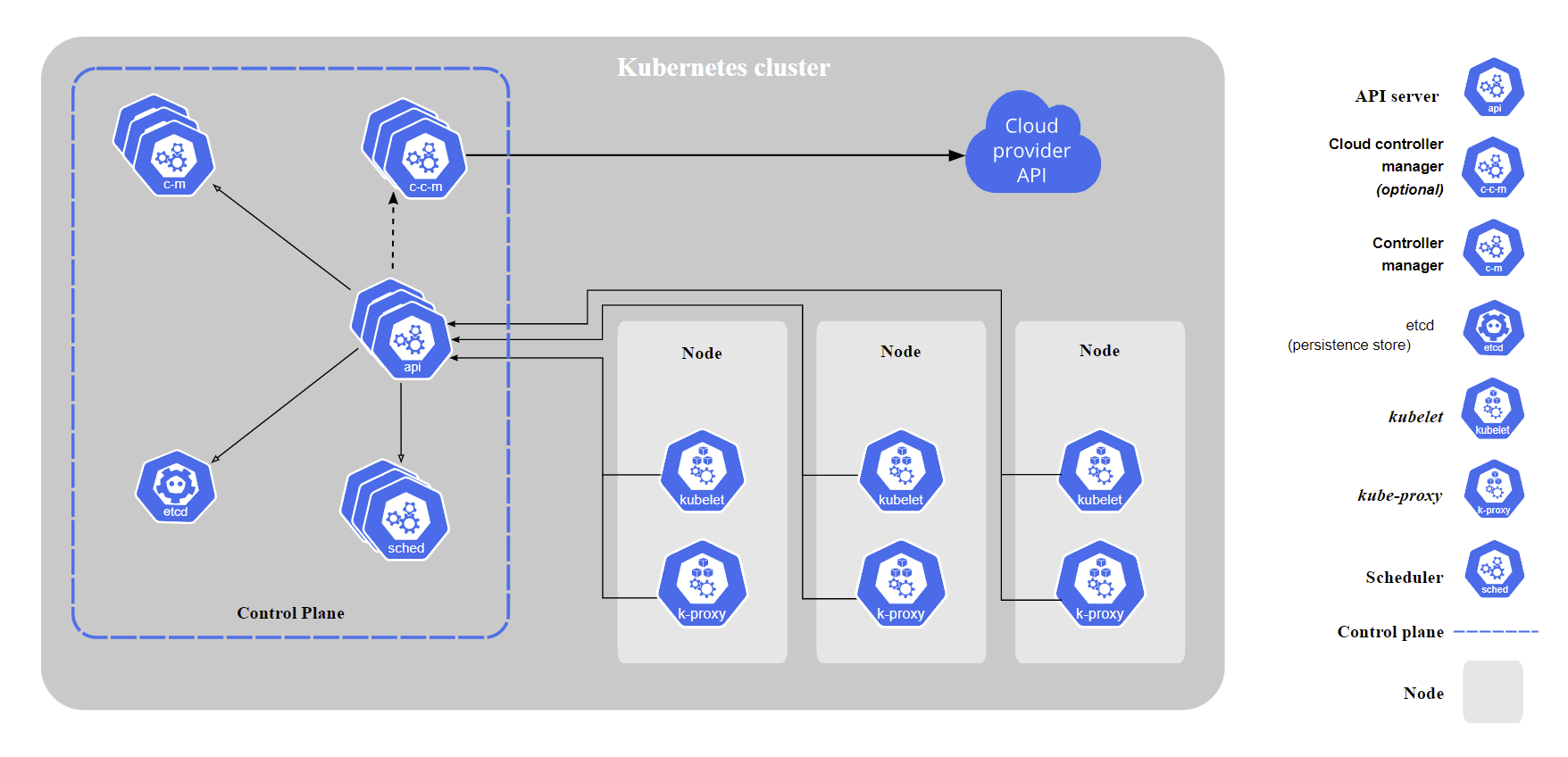

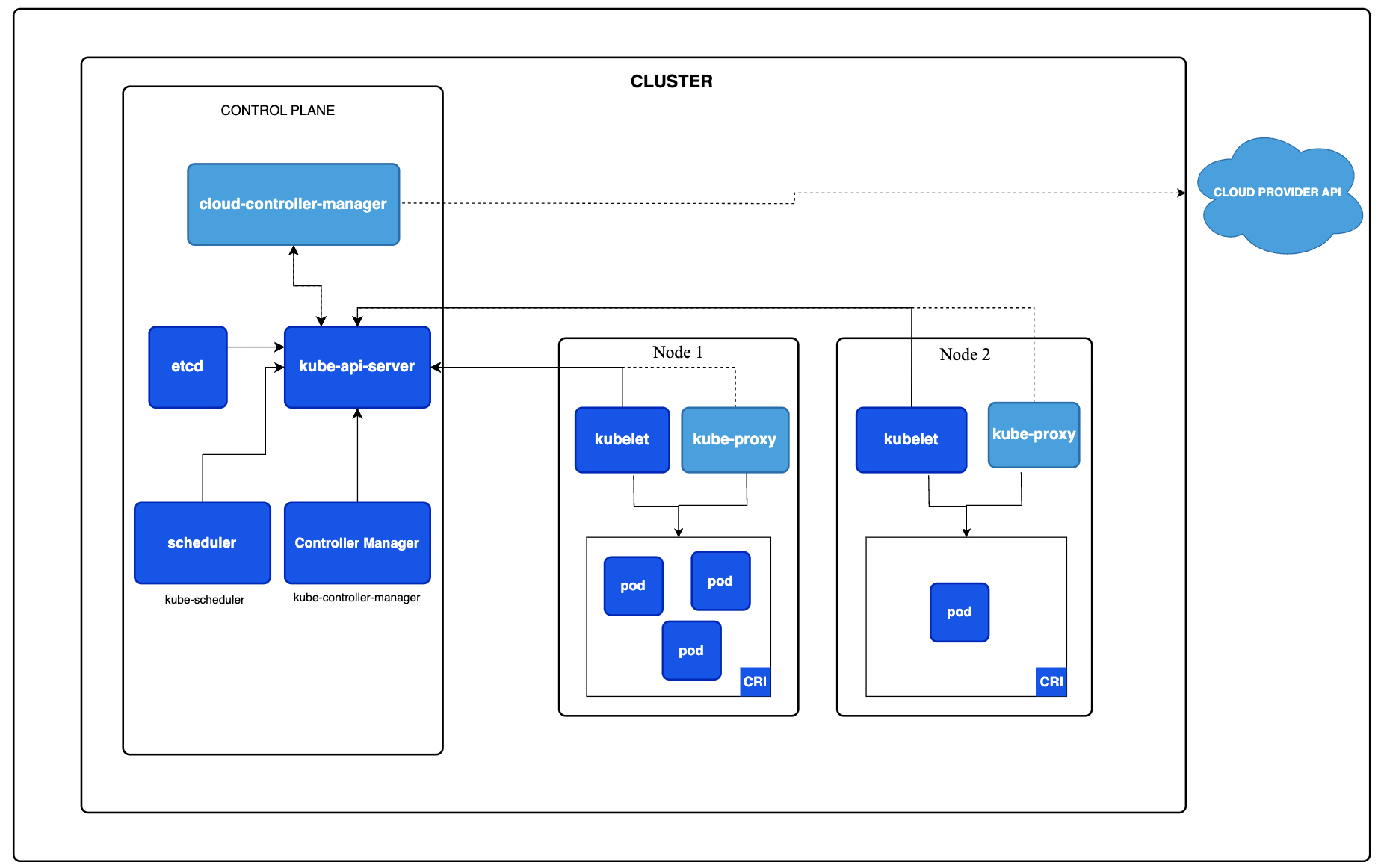

The architecture diagram shown here is taken from the official Kubernetes documentation: https://kubernetes.io/docs/concepts/overview/components/

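It mainly presents the basic architecture of a Kubernetes cluster from a cloud provider's point of view, detailing how the cloud provider API relates to the cluster's control plane (Control Plane) and worker nodes (Node). Note that the diagram deliberately omits several components implemented by third parties, such as the Container Runtime Interface (CRI), the Container Network Interface (CNI), and the Container Storage Interface (CSI).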

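● kube-apiserver: plays a central role. It exposes the Kubernetes API and serves as the front end of the control plane. It is designed to scale horizontally, so capacity can be increased by running more instances.

● etcd: the backing store of the Kubernetes cluster, holding the cluster configuration and the state of all resources. Backing up the cluster's etcd data regularly is essential for keeping that data safe and consistent. etcd can also promptly notify the relevant components when data changes.

● kube-scheduler: schedules newly created Pods. When a new Pod has not yet been assigned to a node, kube-scheduler picks the most suitable node based on factors such as current node load, the application's high-availability and performance requirements, and data affinity.

● kube-controller-manager: runs the controllers that handle routine cluster tasks. Logically each controller is a separate process, but to reduce complexity they are all compiled into a single binary and run in one process. These controllers include the Node Controller, the Replication Controller, the Endpoints Controller, and the Service Account & Token Controllers.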

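The three key plugin interfaces in Kubernetes cover runtime, networking, and storage: the Container Runtime Interface (CRI), the Container Network Interface (CNI), and the Container Storage Interface (CSI). Note that CRI and CNI are basic components that every Kubernetes cluster must deploy, whereas CSI is added on demand, typically when running stateful services.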

I. Environment Preparation

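This walkthrough uses a Kubernetes cluster with one master node and two worker nodes. With a single master the control plane itself is not highly available; the master-lb host entry stands in for the load-balancer address that a highly available setup would use.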

1. Configure the hosts file

[root@master01 ~]# vi /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

10.211.55.20 master01

10.211.55.21 node01

10.211.55.22 node02

10.211.55.20 master-lb # For a non-HA cluster, this is the IP of Master01
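Since every later step relies on these names resolving identically on all nodes, a quick lookup helper can confirm the file says what is intended. This is a minimal sketch; `hosts_ip` is a hypothetical helper name, not part of any tool used in this article.

```shell
# hosts_ip FILE NAME: print the first address that a hosts-format FILE
# maps NAME to. Comments (everything after '#') are ignored.
# hosts_ip is a hypothetical helper introduced for illustration.
hosts_ip() {
    awk -v name="$2" '
        { sub(/#.*/, "") }                       # drop comments
        { for (i = 2; i <= NF; i++)              # field 1 is the address
              if ($i == name) { print $1; exit } }
    ' "$1"
}
```

For example, `hosts_ip /etc/hosts master-lb` should print the same address on master01, node01, and node02.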

2. Install the yum repositories on CentOS 7:

curl -o /etc/yum.repos.d/CentOS-Base.repo https://mirrors.aliyun.com/repo/Centos-7.repo

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

3. Set up the Kubernetes yum repository:

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

Remove the Alibaba Cloud internal mirror entries from the base repo file:

sed -i -e '/mirrors.cloud.aliyuncs.com/d' -e '/mirrors.aliyuncs.com/d' /etc/yum.repos.d/CentOS-Base.repo

4. Install the required tools

yum install wget jq psmisc vim net-tools telnet yum-utils device-mapper-persistent-data lvm2 git -y

5. Disable unneeded services

On all nodes, disable the firewall, dnsmasq, NetworkManager, SELinux, and swap.

systemctl disable --now firewalld

systemctl disable --now dnsmasq

systemctl disable --now NetworkManager

setenforce 0

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

Disable the swap partition:

swapoff -a && sysctl -w vm.swappiness=0

sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab
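The sed command above comments out swap entries so that swap stays off after a reboot. A small check like the following can confirm that no active swap entry is left in the file. It is a sketch only; `active_swap_entries` is a hypothetical helper name.

```shell
# active_swap_entries FILE: print fstab-format lines that still enable swap,
# i.e. lines that are not commented out and whose third field (the
# filesystem type) is "swap". Hypothetical helper for illustration.
active_swap_entries() {
    awk '$1 !~ /^#/ && $3 == "swap"' "$1"
}
```

Running `active_swap_entries /etc/fstab` should print nothing once the entries have been commented out.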

6. Synchronize server time

Install ntpdate on all nodes:

rpm -ivh http://mirrors.wlnmp.com/centos/wlnmp-release-centos.noarch.rpm

yum install ntpdate -y

Synchronize the time:

ln -sf /usr/share/zoneinfo/Asia/Shanghai /etc/localtime
echo 'Asia/Shanghai' >/etc/timezone
ntpdate time2.aliyun.com

Add the time synchronization to crontab:

*/5 * * * * /usr/sbin/ntpdate time2.aliyun.com
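When the same setup is repeated on several nodes, it helps to add the crontab entry only if it is not already there. The filter below is one way to do that, as a sketch; `ensure_cron_line` is a hypothetical helper name.

```shell
# ensure_cron_line LINE: copy a crontab from stdin to stdout and append
# LINE only if an identical line is not already present.
# Hypothetical helper for illustration.
ensure_cron_line() {
    awk -v line="$1" '
        { print }
        $0 == line { found = 1 }
        END { if (!found) print line }
    '
}
```

It can be combined with the standard crontab commands, for example `crontab -l 2>/dev/null | ensure_cron_line '*/5 * * * * /usr/sbin/ntpdate time2.aliyun.com' | crontab -`, which is safe to run more than once.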

7. Configure system limits

# Run on all nodes

ulimit -SHn 65535

vim /etc/security/limits.conf

# Append the following at the end of the file

* soft nofile 65536

* hard nofile 131072

* soft nproc 655350

* hard nproc 655350

* soft memlock unlimited

* hard memlock unlimited
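A soft limit is expected not to exceed the matching hard limit, and a typo in one of these numbers is easy to miss. The following sketch scans a limits.conf-style file for such pairs; `check_limits` is a hypothetical helper, and it only compares numeric values (entries such as `unlimited` are skipped).

```shell
# check_limits FILE: report (domain, item) pairs in a limits.conf-style
# file whose numeric soft value is greater than the hard value.
# Hypothetical helper for illustration.
check_limits() {
    awk '
        $1 ~ /^#/ || NF < 4 { next }             # skip comments, short lines
        $4 ~ /^[0-9]+$/ {
            key = $1 " " $3
            if ($2 == "soft") soft[key] = $4 + 0
            if ($2 == "hard") hard[key] = $4 + 0
        }
        END {
            for (k in soft)
                if ((k in hard) && soft[k] > hard[k])
                    printf "%s: soft %d > hard %d\n", k, soft[k], hard[k]
        }
    ' "$1"
}
```

`check_limits /etc/security/limits.conf` prints nothing when every soft value is within its hard value.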

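8. Configure passwordless SSH login

Master01 logs in to the other nodes without a password. The configuration files and certificates generated during installation are all created on Master01, and the cluster is also managed from Master01. On Alibaba Cloud or AWS, a separate kubectl server is needed. The key setup is as follows: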

ssh-keygen -t rsa

for i in master01 node01 node02;do ssh-copy-id -i .ssh/id_rsa.pub $i;done

9. Download the installation source files

cd /root/ ; git clone https://github.com/dotbalo/k8s-ha-install.git

10. Upgrade the system

# CentOS 7 needs to be upgraded; CentOS 8 can be upgraded as needed

yum update -y --exclude=kernel* && reboot

Kernel upgrade

CentOS 7 needs its kernel upgraded to 4.18 or later; the version installed here is 4.19.

cd /root

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-devel-4.19.12-1.el7.elrepo.x86_64.rpm

wget http://193.49.22.109/elrepo/kernel/el7/x86_64/RPMS/kernel-ml-4.19.12-1.el7.elrepo.x86_64.rpm

Install the kernel on all nodes:

cd /root && yum localinstall -y kernel-ml*

Change the kernel boot order on all nodes:

grub2-set-default 0 && grub2-mkconfig -o /etc/grub2.cfg

grubby --args="user_namespace.enable=1" --update-kernel="$(grubby --default-kernel)"

Check that the default kernel is 4.19:

grubby --default-kernel

/boot/vmlinuz-4.19.12-1.el7.elrepo.x86_64

Reboot all nodes, then check that the running kernel is 4.19:

reboot

uname -a

Linux node02 4.19.12-1.el7.elrepo.x86_64 #1 SMP Fri Dec 21 11:06:36 EST 2018 x86_64 x86_64 x86_64 GNU/Linux
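Instead of reading the `uname` output by eye on each node, the 4.18+ requirement can be checked with a short comparison. This is a sketch under the assumption that the release string starts with `MAJOR.MINOR`; `kernel_at_least` is a hypothetical helper name.

```shell
# kernel_at_least RELEASE MAJOR MINOR: succeed if RELEASE (as printed by
# `uname -r`) is at least MAJOR.MINOR. Hypothetical helper for illustration.
kernel_at_least() {
    rel_major=${1%%.*}               # text before the first dot
    rest=${1#*.}                     # text after the first dot
    rel_minor=${rest%%[!0-9]*}       # leading digits of the remainder
    [ "$rel_major" -gt "$2" ] ||
        { [ "$rel_major" -eq "$2" ] && [ "$rel_minor" -ge "$3" ]; }
}
```

On a node, `kernel_at_least "$(uname -r)" 4 18 && echo "kernel OK"` prints the message only when the running kernel meets the requirement.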

11. Install ipvsadm

Install ipvsadm on all nodes:

yum install ipvsadm ipset sysstat conntrack libseccomp -y

Configure the ipvs modules on all nodes. In kernel 4.19+ nf_conntrack_ipv4 has been renamed to nf_conntrack; on kernels below 4.18, use nf_conntrack_ipv4 instead:

modprobe -- ip_vs

modprobe -- ip_vs_rr

modprobe -- ip_vs_wrr

modprobe -- ip_vs_sh

modprobe -- nf_conntrack

Configure ipvs.conf:

vim /etc/modules-load.d/ipvs.conf

# Add the following content

ip_vs

ip_vs_lc

ip_vs_wlc

ip_vs_rr

ip_vs_wrr

ip_vs_lblc

ip_vs_lblcr

ip_vs_dh

ip_vs_sh

ip_vs_fo

ip_vs_nq

ip_vs_sed

ip_vs_ftp

ip_vs_sh

nf_conntrack

ip_tables

ip_set

xt_set

ipt_set

ipt_rpfilter

ipt_REJECT

ipip

Make the configuration take effect:

systemctl enable --now systemd-modules-load.service
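To confirm that the modules kube-proxy needs in ipvs mode were actually loaded, the `lsmod` output can be compared against a required list. The helper below is a minimal sketch; `missing_modules` is a hypothetical name.

```shell
# missing_modules MODULE...: read `lsmod` output on stdin and print each
# listed MODULE that is not loaded. Hypothetical helper for illustration.
missing_modules() {
    loaded=$(awk 'NR > 1 { print $1 }')      # skip the header row
    for m in "$@"; do
        printf '%s\n' "$loaded" | grep -qxF "$m" || printf '%s\n' "$m"
    done
}
```

For example, `lsmod | missing_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack` prints nothing when all five modules are present.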

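12. Configure the kernel parameters required by the Kubernetes cluster

Enable the kernel parameters that a Kubernetes cluster requires. Configure them on all nodes: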

cat <<EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

fs.may_detach_mounts = 1

vm.overcommit_memory=1

vm.panic_on_oom=0

fs.inotify.max_user_watches=89100

fs.file-max=52706963

fs.nr_open=52706963

net.netfilter.nf_conntrack_max=2310720

net.ipv4.tcp_keepalive_time = 600

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_keepalive_intvl =15

net.ipv4.tcp_max_tw_buckets = 36000

net.ipv4.tcp_tw_reuse = 1

net.ipv4.tcp_max_orphans = 327680

net.ipv4.tcp_orphan_retries = 3

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.ip_conntrack_max = 65536

net.ipv4.tcp_max_syn_backlog = 16384

net.ipv4.tcp_timestamps = 0

net.core.somaxconn = 16384

EOF

sysctl --system
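Long parameter lists tend to accumulate repeated keys; in the listing above, net.ipv4.tcp_max_syn_backlog is set twice. A duplicate is harmless when both values agree, but it hides which value wins when they differ. The sketch below lists repeated keys; `duplicate_sysctl_keys` is a hypothetical helper name.

```shell
# duplicate_sysctl_keys FILE: print every key that is assigned more than
# once in a sysctl.d-style file. Hypothetical helper for illustration.
duplicate_sysctl_keys() {
    awk -F= '
        /^[ \t]*(#|;|$)/ { next }                # comments and blank lines
        { key = $1; gsub(/[ \t]/, "", key)       # strip padding around key
          if (++seen[key] == 2) print key }
    ' "$1"
}
```

Running `duplicate_sysctl_keys /etc/sysctl.d/k8s.conf` lists each repeated key once.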

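Reboot

After the kernel parameters are configured on all nodes, reboot the servers and confirm that the modules are still loaded afterwards: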

reboot

lsmod | grep --color=auto -e ip_vs -e nf_conntrack

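II. Installing the Basic Kubernetes Components

This section installs the components used in the cluster, such as Docker CE and the Kubernetes components.

1. Install Docker

Install Docker CE 19.03 on all nodes: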

yum install docker-ce-19.03.* -y

Newer kubelet versions recommend systemd, so change Docker's cgroup driver to systemd:

mkdir -p /etc/docker
cat > /etc/docker/daemon.json <<EOF
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF

Enable Docker at boot on all nodes:

systemctl daemon-reload && systemctl enable --now docker
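The kubelet and Docker need to agree on the cgroup driver, so it is worth confirming that the daemon.json change took effect after Docker starts. The filter below is a sketch that reads the human-readable `docker info` output; `cgroup_driver` is a hypothetical helper name.

```shell
# cgroup_driver: read `docker info` output on stdin and print the value of
# its "Cgroup Driver" line. Hypothetical helper for illustration.
cgroup_driver() {
    sed -n 's/^[[:space:]]*Cgroup Driver:[[:space:]]*//p'
}
```

`docker info 2>/dev/null | cgroup_driver` should print `systemd` on every node.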

2. Install the Kubernetes components

yum list kubeadm.x86_64 --showduplicates | sort -r

Install version 1.22.9 of kubelet, kubeadm, and kubectl on all nodes:

yum install kubelet-1.22.9 kubeadm-1.22.9 kubectl-1.22.9

The default pause image is hosted on gcr.io, which may be unreachable from mainland China, so configure the kubelet to use the pause image from Alibaba Cloud:

cat >/etc/sysconfig/kubelet<<EOF

KUBELET_EXTRA_ARGS="--cgroup-driver=systemd --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.2"

EOF

Enable the kubelet at boot:

systemctl daemon-reload

systemctl enable --now kubelet

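III. Initializing the Kubernetes Cluster

Official initialization documentation: https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/high-availability/

1. Create the kubeadm-config.yaml configuration file:

Configure this on the Master01 node: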

vi kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
bootstrapTokens:
- token: "7t2weq.bjbawausm0jaxury"
  ttl: "24h0m0s"
  usages:
  - signing
  - authentication
  groups:
  - system:bootstrappers:kubeadm:default-node-token
localAPIEndpoint:
  advertiseAddress: "10.211.55.20"
  bindPort: 6443
nodeRegistration:
  criSocket: "/var/run/dockershim.sock"
  name: master01
  taints:
  - key: "node-role.kubernetes.io/master"
    effect: NoSchedule
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
certificatesDir: "/etc/kubernetes/pki"
clusterName: "kubernetes"
controlPlaneEndpoint: "10.211.55.20:16443"
apiServer:
  certSANs:
  - "10.211.55.20"
  timeoutForControlPlane: "4m0s"
dns:
  type: "CoreDNS"
etcd:
  local:
    dataDir: "/var/lib/etcd"
imageRepository: "registry.cn-hangzhou.aliyuncs.com/google_containers"
kubernetesVersion: "v1.22.9"
networking:
  dnsDomain: "cluster.local"
  podSubnet: "172.168.0.0/12"
  serviceSubnet: "10.96.0.0/12"
controllerManager: {}
scheduler: {}
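The podSubnet and serviceSubnet values are CIDR ranges, and it is easy to write one whose address is not the start of the range it denotes. The sketch below clears the host bits of an IPv4 CIDR to show the actual network; `cidr_network` is a hypothetical helper and assumes a shell with 64-bit arithmetic.

```shell
# cidr_network CIDR: print the network an IPv4 CIDR denotes, i.e. the
# address with all host bits cleared. Hypothetical helper for illustration.
cidr_network() {
    addr=${1%/*}
    bits=${1#*/}
    old_ifs=$IFS; IFS=.
    set -- $addr                     # split the address into four octets
    IFS=$old_ifs
    ip=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    mask=$(( (0xFFFFFFFF << (32 - $bits)) & 0xFFFFFFFF ))
    net=$(( ip & mask ))
    printf '%d.%d.%d.%d/%d\n' \
        $(( (net >> 24) & 255 )) $(( (net >> 16) & 255 )) \
        $(( (net >> 8) & 255 )) $(( net & 255 )) "$bits"
}
```

For the file above, `cidr_network 172.168.0.0/12` prints `172.160.0.0/12`, which lies outside the private 172.16.0.0/12 block, while `cidr_network 10.96.0.0/12` prints the value unchanged. Whether that matters depends on which addresses the Pods need to reach.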

2. Migrate the kubeadm configuration file

kubeadm config migrate --old-config kubeadm-config.yaml --new-config new.yaml

3. Pull the images

kubeadm config images pull --config /root/new.yaml

# Output

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.22.9

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.22.9

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.22.9

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.22.9

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.5

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.0-0

[config/images] Pulled registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.4

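4. Enable the kubelet at boot

systemctl enable --now kubelet

If the kubelet fails to start at this point, this can be ignored; it will start once the cluster has been initialized.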

5. Initialize the Master01 node

After initialization, the corresponding certificates and configuration files are generated under /etc/kubernetes.

kubeadm init --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.22.9 --apiserver-advertise-address 10.211.55.20 --pod-network-cidr=10.244.0.0/16 --token-ttl 0

# Output

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.211.55.20:6443 --token 7ebdnw.ng2lgqxs51i1s9y4 \

--discovery-token-ca-cert-hash sha256:becf736ad9e638815e88122835ccf4d6ad1dcfa8088f6ff4c7e25a49d680baba


# Note down the kubeadm join command above; it is needed later to join the worker nodes.
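If the `kubeadm init` output was saved to a file, the join command can be pulled out of it rather than copied by hand. This is a sketch that assumes the two-line, backslash-continued layout shown above; `join_command` is a hypothetical helper name.

```shell
# join_command FILE: print the `kubeadm join ...` command found in a saved
# copy of the `kubeadm init` output, folded onto a single line.
# Hypothetical helper for illustration.
join_command() {
    awk '
        /^[ \t]*kubeadm join / { collecting = 1 }
        collecting && NF {
            line = $0
            cont = sub(/[ \t]*\\$/, "", line)    # 1 if a trailing "\" was removed
            gsub(/^[ \t]+|[ \t]+$/, "", line)    # trim surrounding blanks
            cmd = (cmd == "" ? line : cmd " " line)
            if (!cont) { print cmd; exit }
        }
    ' "$1"
}
```

If the output was not saved, `kubeadm token create --print-join-command` on the master prints a fresh join command.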

6. Create the kubeconfig

Following the hints in the log above, create the .kube directory and kubeconfig:

mkdir /root/.kube
sudo cp -i /etc/kubernetes/admin.conf /root/.kube/config
chown root:root /root/.kube/
export KUBECONFIG=/etc/kubernetes/admin.conf

7. Check the status

kubectl get nodes

# Output

NAME STATUS ROLES AGE VERSION

master01 NotReady control-plane,master 9m51s v1.22.9

With a kubeadm-based installation, all system components run as containers in the kube-system namespace. Check the Pod status:

kubectl get pods -n kube-system -o wide

# Output

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

coredns-7f6cbbb7b8-dc2z2 0/1 Pending 0 10m <none> <none> <none> <none>

coredns-7f6cbbb7b8-n7h2t 0/1 Pending 0 10m <none> <none> <none> <none>

etcd-master01 1/1 Running 0 10m 10.211.55.20 master01 <none> <none>

kube-apiserver-master01 1/1 Running 0 10m 10.211.55.20 master01 <none> <none>

kube-controller-manager-master01 1/1 Running 0 10m 10.211.55.20 master01 <none> <none>

kube-proxy-r66z2 1/1 Running 0 10m 10.211.55.20 master01 <none> <none>

kube-scheduler-master01 1/1 Running 0 10m 10.211.55.20 master01 <none> <none>

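8. Configure the worker nodes

Worker nodes mainly run business applications. In production, it is not recommended to schedule Pods other than system components onto the master node; in a test environment, the master node may run Pods to save resources.

Run on node01 and node02: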

kubeadm join 10.211.55.20:6443 --token 7ebdnw.ng2lgqxs51i1s9y4 \

--discovery-token-ca-cert-hash sha256:becf736ad9e638815e88122835ccf4d6ad1dcfa8088f6ff4c7e25a49d680baba

# Output

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

Check the node status:

kubectl get nodes

# Output

NAME STATUS ROLES AGE VERSION

master01 NotReady control-plane,master 13m v1.22.9

node01 NotReady <none> 87s v1.22.9

node02 NotReady <none> 33s v1.22.9
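When scripting the installation, the node list can be filtered to the nodes that still need attention. The following is a minimal sketch over the plain-text table; `not_ready_nodes` is a hypothetical helper name.

```shell
# not_ready_nodes: read `kubectl get nodes` output on stdin and print the
# names of nodes whose STATUS column is not exactly "Ready" (so a node
# shown as "Ready,SchedulingDisabled" is listed too).
# Hypothetical helper for illustration.
not_ready_nodes() {
    awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

`kubectl get nodes | not_ready_nodes` prints all three node names at this stage and nothing once every node is Ready.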

Check the Pod status:

kubectl get pod -n kube-system

# Output

NAME READY STATUS RESTARTS AGE

coredns-7f6cbbb7b8-dc2z2 0/1 Pending 0 65m

coredns-7f6cbbb7b8-n7h2t 0/1 Pending 0 65m

etcd-master01 1/1 Running 0 65m

kube-apiserver-master01 1/1 Running 0 65m

kube-controller-manager-master01 1/1 Running 0 65m

kube-proxy-8wfkb 1/1 Running 0 52m

kube-proxy-cqj9c 1/1 Running 0 53m

kube-proxy-r66z2 1/1 Running 0 65m

kube-scheduler-master01 1/1 Running 0 65m

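The two coredns Pods are Pending because no pod network plugin (CNI) is running yet. Before installing one, delete any calico resources left over from an earlier attempt: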

cd k8s-ha-install/

cd calico/

kubectl delete -f calico-etcd.yaml

# Output

Error from server (NotFound): error when deleting "calico-etcd.yaml": secrets "calico-etcd-secrets" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": configmaps "calico-config" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": clusterroles.rbac.authorization.k8s.io "calico-kube-controllers" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": clusterrolebindings.rbac.authorization.k8s.io "calico-kube-controllers" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": clusterroles.rbac.authorization.k8s.io "calico-node" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": clusterrolebindings.rbac.authorization.k8s.io "calico-node" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": daemonsets.apps "calico-node" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": serviceaccounts "calico-node" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": deployments.apps "calico-kube-controllers" not found

Error from server (NotFound): error when deleting "calico-etcd.yaml": serviceaccounts "calico-kube-controllers" not found

With a pod network plugin running (flannel, installed in the next step), all Pods are Running and healthy:

kubectl get pod -n kube-system

# Output

NAME READY STATUS RESTARTS AGE

coredns-7f6cbbb7b8-dc2z2 1/1 Running 0 66m

coredns-7f6cbbb7b8-n7h2t 1/1 Running 0 66m

etcd-master01 1/1 Running 0 66m

kube-apiserver-master01 1/1 Running 0 66m

kube-controller-manager-master01 1/1 Running 0 66m

kube-proxy-8wfkb 1/1 Running 0 53m

kube-proxy-cqj9c 1/1 Running 0 54m

kube-proxy-r66z2 1/1 Running 0 66m

kube-scheduler-master01 1/1 Running 0 66m

kubectl get nodes

# Output

NAME STATUS ROLES AGE VERSION

master01 Ready control-plane,master 66m v1.22.9

node01 Ready <none> 54m v1.22.9

node02 Ready <none> 53m v1.22.9

9. Install flannel

Download flannel:

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# Output

--2023-12-04 16:17:02-- https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Resolving raw.githubusercontent.com (raw.githubusercontent.com)... 185.199.110.133, 185.199.111.133, 185.199.108.133, ...

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|185.199.110.133|:443... connected.

HTTP request sent, awaiting response... 200 OK

Length: 4398 (4.3K) [text/plain]

Saving to: ‘kube-flannel.yml’

100%[=========================================================================================================>] 4,398 --.-K/s in 0s

2023-12-04 16:17:02 (52.4 MB/s) - ‘kube-flannel.yml’ saved [4398/4398]

Install flannel:

cd /root/k8s-ha-install/   # the directory where kube-flannel.yml was saved

kubectl apply -f kube-flannel.yml

# Output

namespace/kube-flannel created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

kubectl get pod -n kube-flannel

# Output

NAME READY STATUS RESTARTS AGE

kube-flannel-ds-p9dp5 1/1 Running 0 125m

kube-flannel-ds-rx4h5 1/1 Running 0 125m

kube-flannel-ds-xpvl6 1/1 Running 0 125m

Check the flannel status:

kubectl get pod -n kube-flannel -o wide

# Output

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kube-flannel-ds-p9dp5 1/1 Running 0 3h48m 10.211.55.22 node02 <none> <none>

kube-flannel-ds-rx4h5 1/1 Running 0 3h48m 10.211.55.21 node01 <none> <none>

kube-flannel-ds-xpvl6 1/1 Running 0 3h48m 10.211.55.20 master01 <none> <none>

ip route list | grep flannel

# Output

10.244.1.0/24 via 10.244.1.0 dev flannel.1 onlink

10.244.2.0/24 via 10.244.2.0 dev flannel.1 onlink

cat /run/flannel/subnet.env

# Output

FLANNEL_NETWORK=10.244.0.0/16

FLANNEL_SUBNET=10.244.0.1/24

FLANNEL_MTU=1450

FLANNEL_IPMASQ=true

for i in {20..22};do ssh root@10.211.55.$i ifconfig | grep -A1 flannel ;done

# Output

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 10.244.0.0 netmask 255.255.255.255 broadcast 0.0.0.0

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 10.244.1.0 netmask 255.255.255.255 broadcast 0.0.0.0

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 10.244.2.0 netmask 255.255.255.255 broadcast 0.0.0.0
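The FLANNEL_NETWORK value in subnet.env should match the `--pod-network-cidr` passed to `kubeadm init` (10.244.0.0/16 here). The sketch below reads that file and compares the two; `flannel_value` and `flannel_network_matches` are hypothetical helper names, and the key argument is assumed to be a plain variable name.

```shell
# flannel_value FILE KEY: print the value of KEY from a flannel subnet.env
# file. KEY is used inside a sed pattern, so pass plain names only.
# Hypothetical helper for illustration.
flannel_value() {
    sed -n "s/^$2=//p" "$1"
}

# flannel_network_matches FILE CIDR: succeed if FLANNEL_NETWORK equals CIDR.
flannel_network_matches() {
    [ "$(flannel_value "$1" FLANNEL_NETWORK)" = "$2" ]
}
```

For example, `flannel_network_matches /run/flannel/subnet.env 10.244.0.0/16 || echo "pod CIDR mismatch"` stays silent on a consistent setup.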

IV. Deploying MogDB

1. Configure 0-pv.yaml

apiVersion: v1
kind: PersistentVolume
metadata:
  name: mogdb-pv0
  labels:
    app: mogdb
spec:
  capacity:
    storage: 2Gi
  accessModes: ["ReadWriteOnce"]
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local
  local:
    path: /mogdb-data/0 # must be created in advance and be empty
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          #- k8s01-master
          - kind-worker # replace with the actual node name shown by kubectl get node
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: mogdb-pv1
  labels:
    app: mogdb
spec:
  capacity:
    storage: 2Gi
  accessModes: ["ReadWriteOnce"]
  persistentVolumeReclaimPolicy: Retain
  storageClassName: local
  local:
    path: /mogdb-data/1 # must be created in advance and be empty
  nodeAffinity:
    required:
      nodeSelectorTerms:
      - matchExpressions:
        - key: kubernetes.io/hostname
          operator: In
          values:
          #- k8s01-slave-01
          - kind-worker2 # replace with the actual node name shown by kubectl get node

2. Configure 1-statefulset.yaml

apiVersion: v1
kind: Service
metadata:
  name: mogdb
  labels:
    app: mogdb
    app.kubernetes.io/name: mogdb
spec:
  ports:
  - name: mogdb
    port: 5432
  clusterIP: None
  selector:
    app: mogdb
---
apiVersion: v1
kind: Service
metadata:
  name: mogdb-read
  labels:
    app: mogdb
    app.kubernetes.io/name: mogdb
    readonly: "true"
spec:
  ports:
  - name: mogdb
    port: 5432
  selector:
    app: mogdb
---
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: mogdb
spec:
  selector:
    matchLabels:
      app: mogdb
      app.kubernetes.io/name: mogdb
  serviceName: mogdb
  replicas: 2
  template:
    metadata:
      labels:
        app: mogdb
        app.kubernetes.io/name: mogdb
    spec:
      shareProcessNamespace: true
      containers:
      - name: mogdb
        image: swr.cn-north-4.myhuaweicloud.com/mogdb/mogdb:5.0.0
        command:
        - bash
        - "-c"
        - |
          set -ex
          MogDB_Role=
          REPL_CONN_INFO=
          cat >>/home/omm/.profile <<-EOF
          export OG_SUBNET="0.0.0.0/0"
          export PGHOST="/var/lib/mogdb/tmp"
          EOF
          [[ -d "$PGHOST" ]] || (mkdir -p $PGHOST && chown omm $PGHOST)
          hostname=`hostname`
          [[ "$hostname" =~ -([0-9]+)$ ]] || exit 1
          ordinal=${BASH_REMATCH[1]}
          if [[ $ordinal -eq 0 ]];then
            MogDB_Role="primary"
          else
            MogDB_Role="standby"
            echo "$hostname $PodIP" |ncat --send-only mogdb-0.mogdb 6543
            remote_ip=`ping mogdb-0.mogdb -c 1 | sed '1{s/[^(]*(//;s/).*//;q}'`
            REPL_CONN_INFO="replconninfo${ordinal} = 'localhost=$PodIP localport=5432 localservice=5434 remotehost=$remote_ip remoteport=5432 remoteservice=5434'"
          fi
          [[ -n "$REPL_CONN_INFO" ]] && export REPL_CONN_INFO
          source /home/omm/.profile
          exec bash /entrypoint.sh -M "$MogDB_Role"
        env:
        - name: PGHOST
          value: /var/lib/mogdb/tmp
        - name: PodIP
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: status.podIP
        ports:
        - name: mogdb
          containerPort: 5432
        volumeMounts:
        - name: data
          mountPath: /var/lib/mogdb
          subPath: mogdb
        resources:
          requests:
            cpu: 500m
            memory: 1Gi
        livenessProbe:
          exec:
            command:
            - sh
            - -c
            - su -l omm -c "gsql -dpostgres -c 'select 1'"
          initialDelaySeconds: 120
          periodSeconds: 10
          timeoutSeconds: 5
          failureThreshold: 12
        readinessProbe:
          exec:
            # Check we can execute queries over TCP (skip-networking is off).
            command:
            - sh
            - -c
            - su -l omm -c "gsql -dpostgres -c 'select 1'"
          initialDelaySeconds: 30
          periodSeconds: 2
          timeoutSeconds: 1
      - name: sidecar
        image: swr.cn-north-4.myhuaweicloud.com/mogdb/mogdb:5.0.0
        ports:
        - name: sidecar
          containerPort: 6543
        command:
        - bash
        - "-c"
        - |
          set -ex
          cat >>/home/omm/.profile <<-EOF
          export PGHOST="/var/lib/mogdb/tmp"
          EOF
          source /home/omm/.profile
          while true;do
            ncat -l 6543 >/tmp/remote.info
            read host_name remote_ip < /tmp/remote.info
            [[ "$host_name" =~ -([0-9]+)$ ]] || exit 1
            remote_ordinal=${BASH_REMATCH[1]}
            repl_conn_info="replconninfo${remote_ordinal} = 'localhost=$PodIP localport=5432 localservice=5434 remotehost=$remote_ip remoteport=5432 remoteservice=5434'"
            echo "$repl_conn_info" >> "${PGDATA}/postgresql.conf"
            su - omm -c "gs_ctl reload"
          done
        env:
        - name: PodIP
          valueFrom:
            fieldRef:
              apiVersion: v1
              fieldPath: status.podIP
        - name: PGDATA
          value: "/var/lib/mogdb/data"
        - name: PGHOST
          value: "/var/lib/mogdb/tmp"
        volumeMounts:
        - name: data
          mountPath: /var/lib/mogdb
          subPath: mogdb
        resources:
          requests:
            cpu: 500m
            memory: 1Gi
  volumeClaimTemplates:
  - metadata:
      name: data
    spec:
      accessModes: ["ReadWriteOnce"]
      storageClassName: "local"
      resources:
        requests:
          storage: 2Gi
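The startup script in the manifest decides each Pod's role from the ordinal at the end of its hostname: mogdb-0 becomes the primary and every other replica a standby. The same rule can be tried out in isolation with the sketch below. It is a POSIX re-statement of the manifest's bash regex, not the code the container runs, and `mogdb_role` is a hypothetical helper name.

```shell
# mogdb_role HOSTNAME: print "primary" when the trailing ordinal is 0 and
# "standby" otherwise; fail if HOSTNAME does not end in -<number>.
# Hypothetical helper for illustration.
mogdb_role() {
    case $1 in
        *-*) ;;                      # a dash is required before the ordinal
        *) return 1 ;;
    esac
    ordinal=${1##*-}                 # text after the last dash
    case $ordinal in
        ''|*[!0-9]*) return 1 ;;     # empty or not purely numeric
    esac
    if [ "$ordinal" -eq 0 ]; then echo primary; else echo standby; fi
}
```

With `replicas: 2`, the StatefulSet creates Pods named mogdb-0 and mogdb-1, so `mogdb_role mogdb-0` gives the primary and `mogdb_role mogdb-1` a standby.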

3. Deploy MogDB

kubectl apply -f 0-pv.yaml

# Output

persistentvolume/mogdb-pv0 created

persistentvolume/mogdb-pv1 created

kubectl apply -f 1-statefulset.yaml

# Output

service/mogdb created

service/mogdb-read created

statefulset.apps/mogdb created

kubectl exec -it mogdb-0 -c mogdb -- bash

root@mogdb-0:/# su - omm

omm@mogdb-0:~$ gsql -d postgres

gsql ((MogDB x.x.x build 56189e20) compiled at 2022-01-07 18:47:53 commit 0 last mr )

Non-SSL connection (SSL connection is recommended when requiring high-security)

Type "help" for help.

MogDB=#

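With the steps above, we have configured and initialized a Kubernetes cluster, from basic system settings through cluster deployment and network configuration, and every step matters. I hope this tutorial helps you on your way to building and managing Kubernetes clusters.

As Kubernetes becomes more widespread in the cloud-native ecosystem, deploying MogDB on Kubernetes is increasingly common. This trend reflects both the importance of Kubernetes in managing cloud infrastructure and the growing demand for MogDB as a flexible, efficient database solution that adapts well to cloud-native environments.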