原标题:Interpretable Explanations of Black Boxes by Meaningful Perturbation

作者:Ruth C. Fong, Andrea Vedaldi

中文摘要:

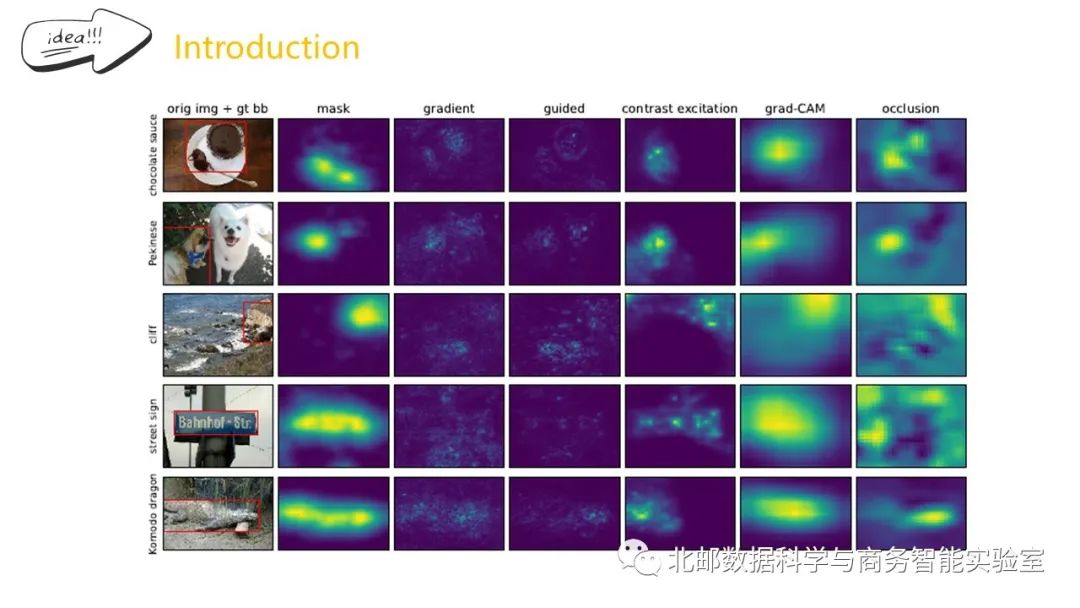

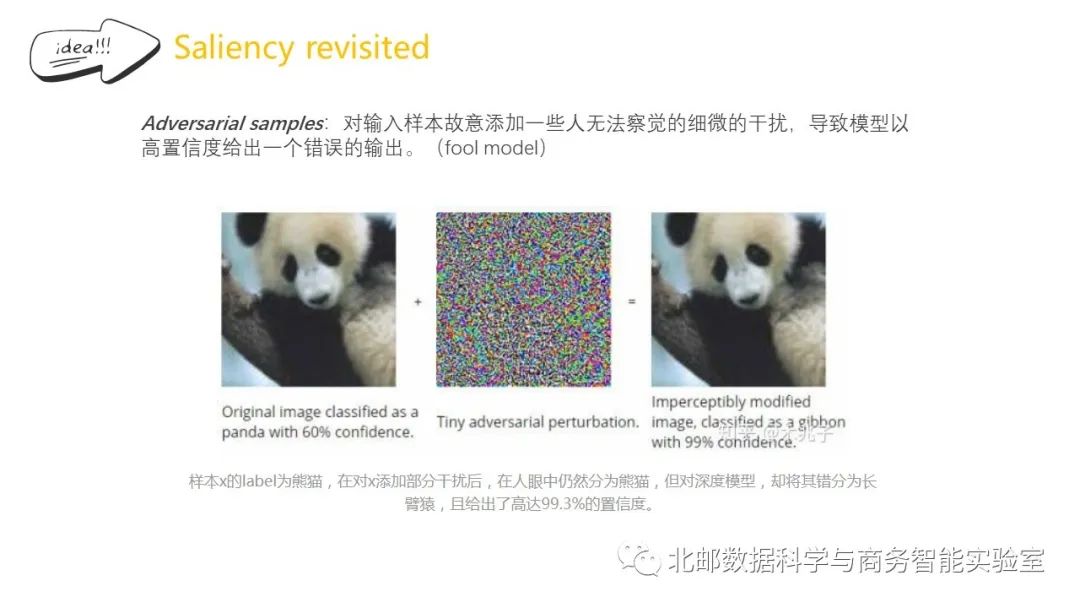

随着机器学习算法越来越多地应用于高影响但高风险的任务,如医疗诊断或自动驾驶,研究人员能够解释这些算法是如何得出他们的预测是至关重要的。近年来,出现了一些图像显著性方法,这些方法已经被开发用来总结高度复杂的神经网络在图像中“寻找”它们预测的证据的位置。然而,这些技术受到其启发式性质和体系结构约束的限制。

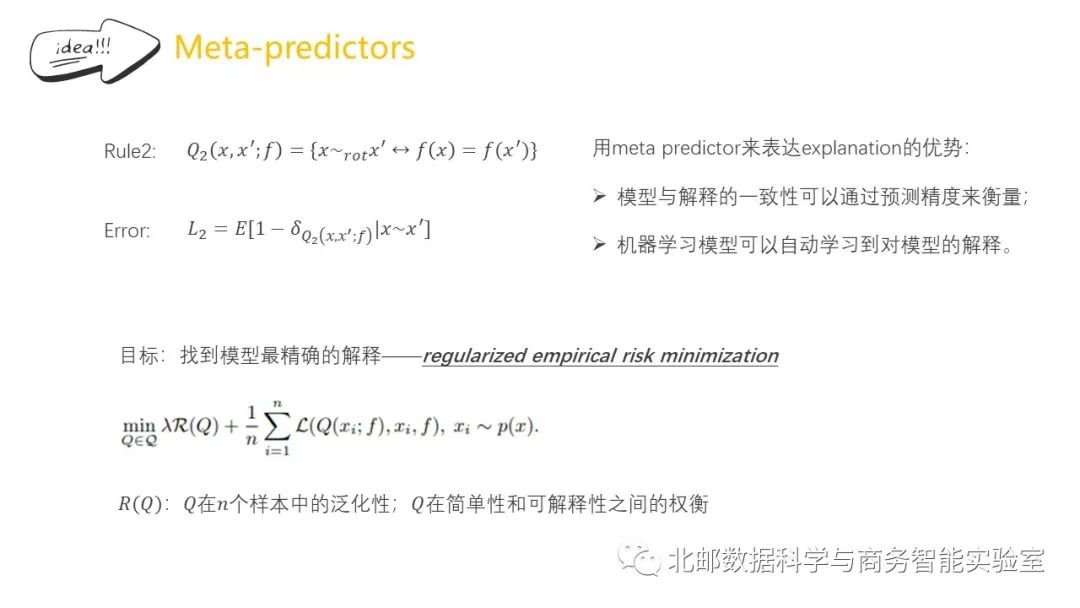

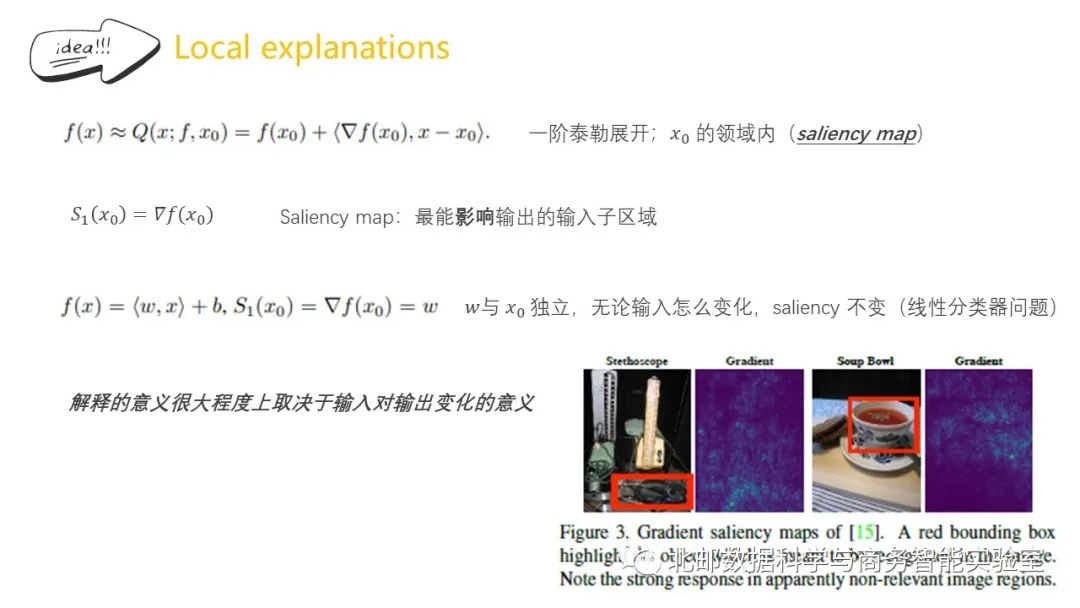

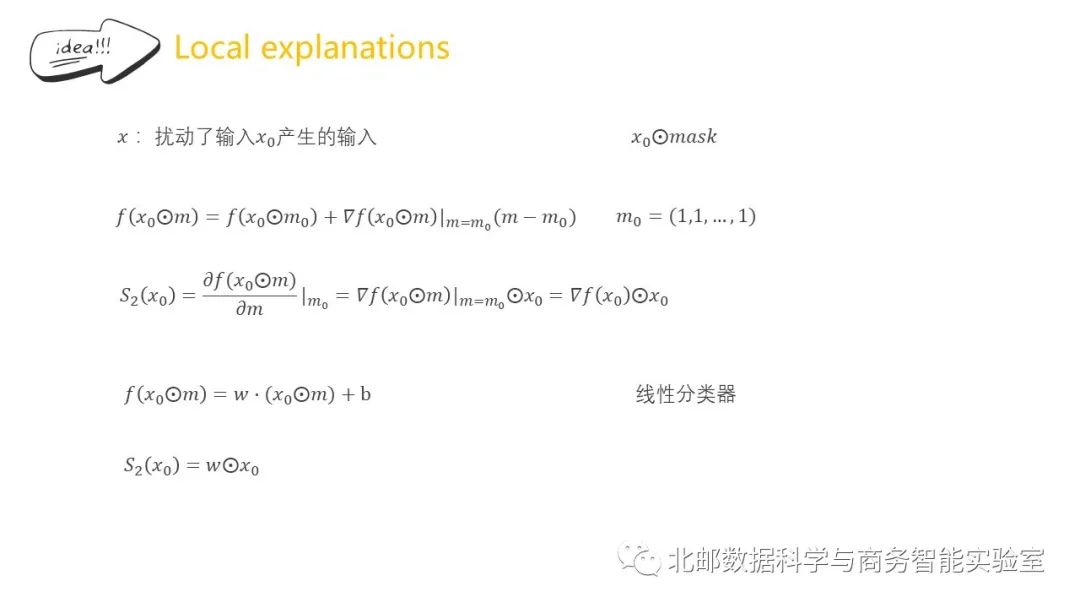

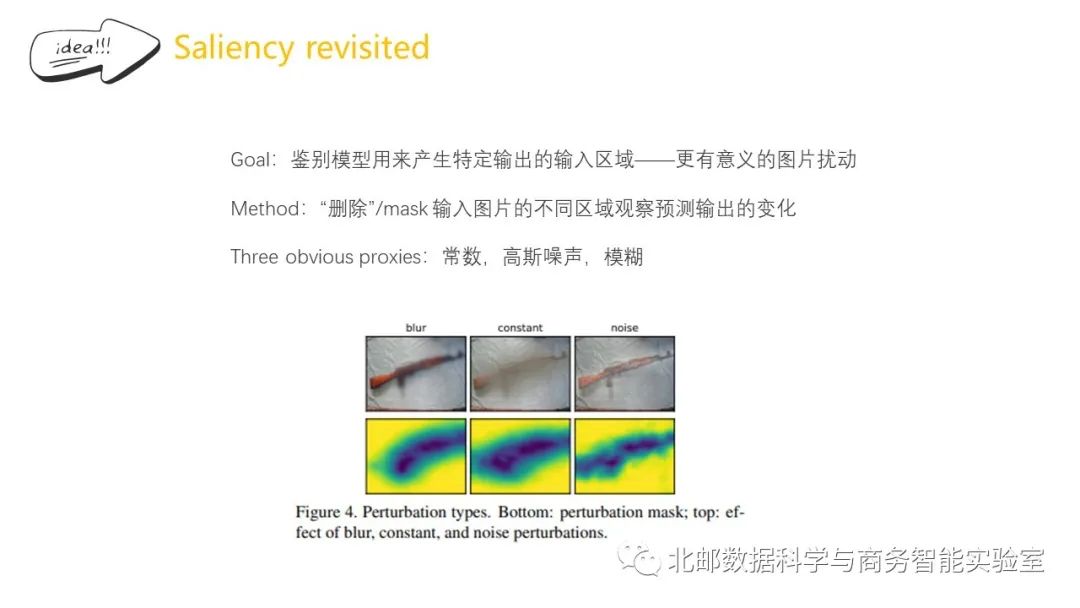

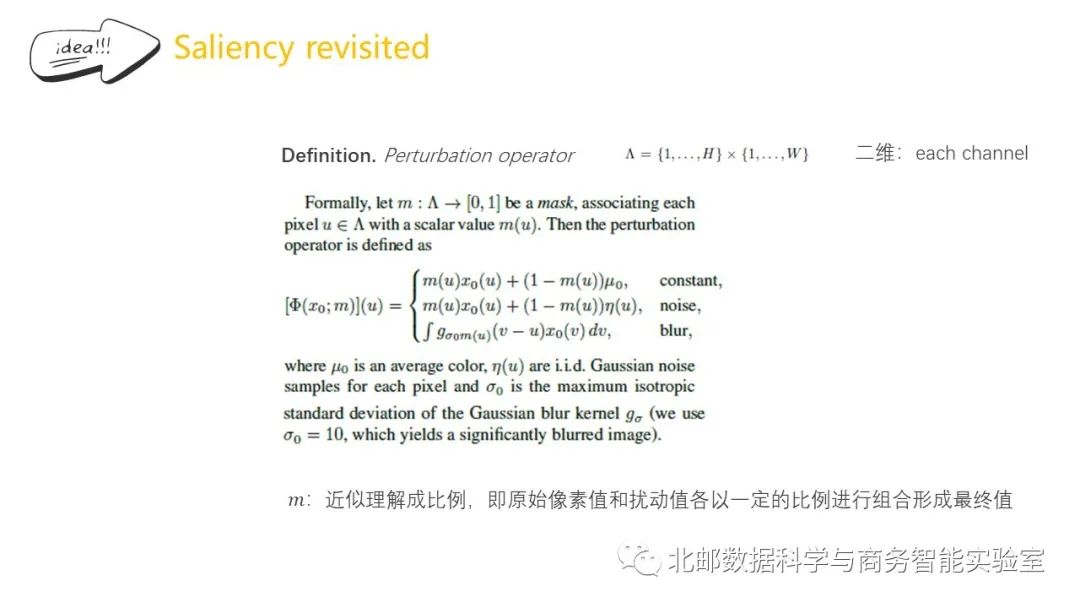

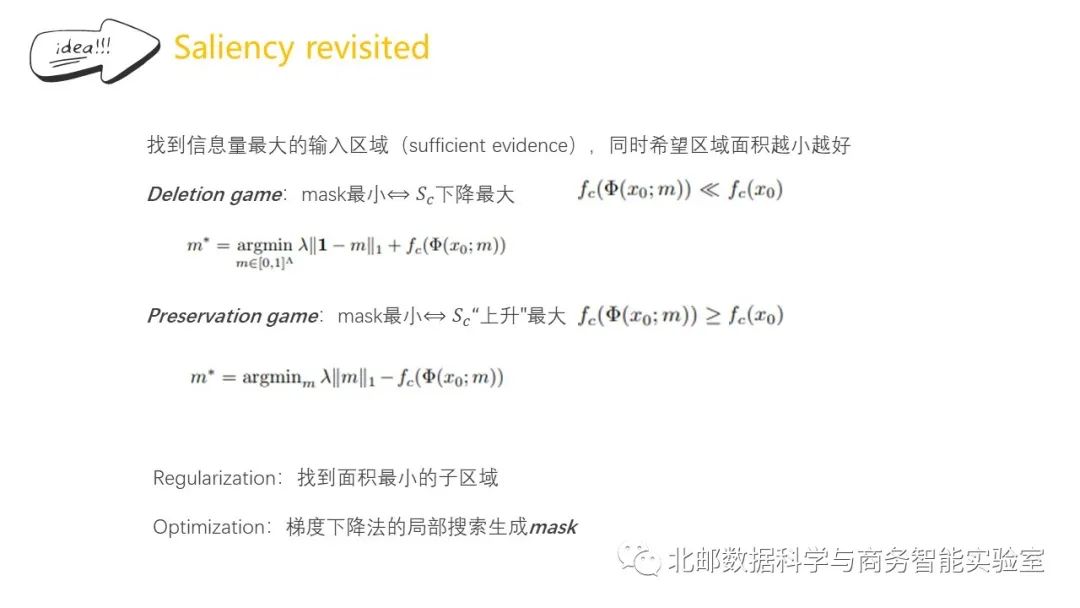

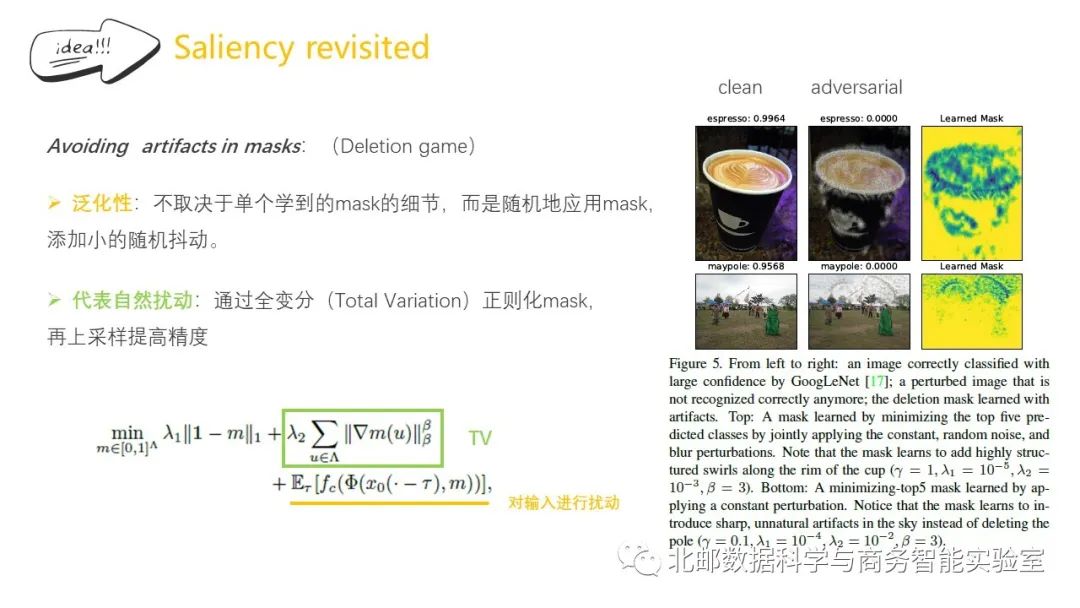

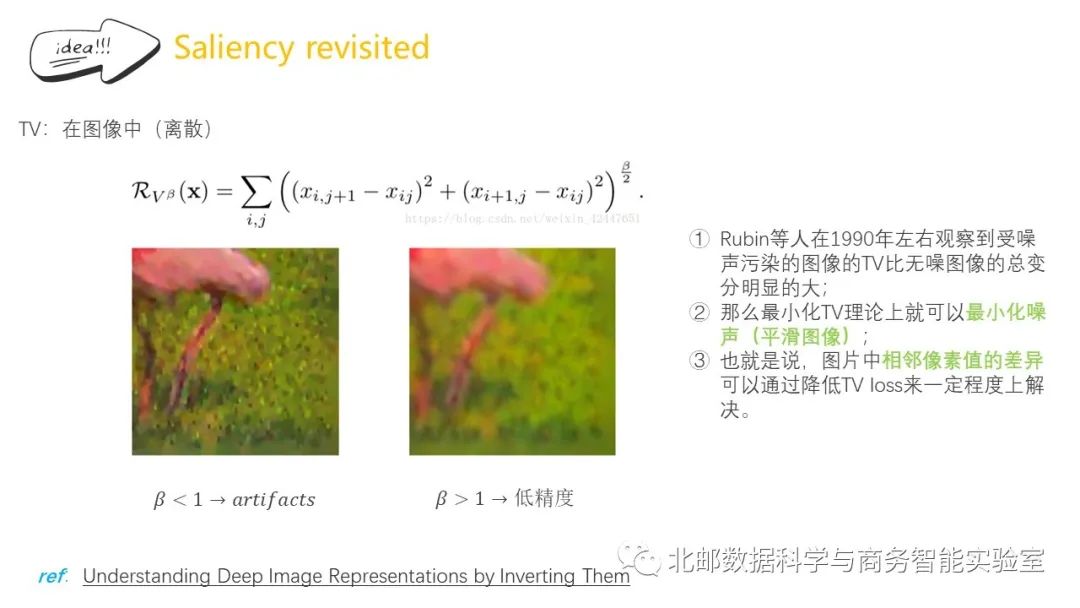

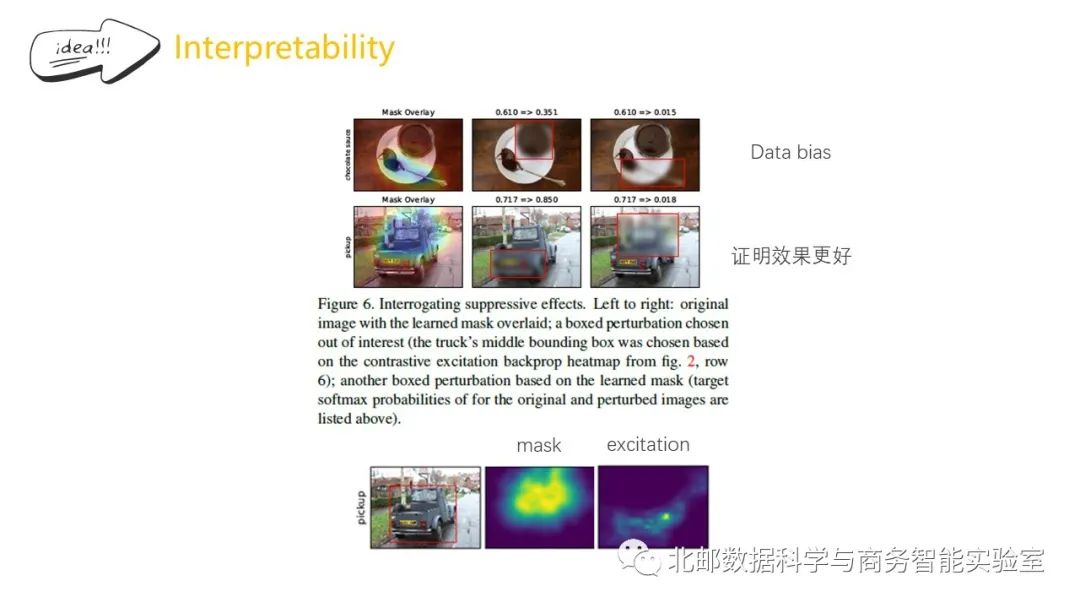

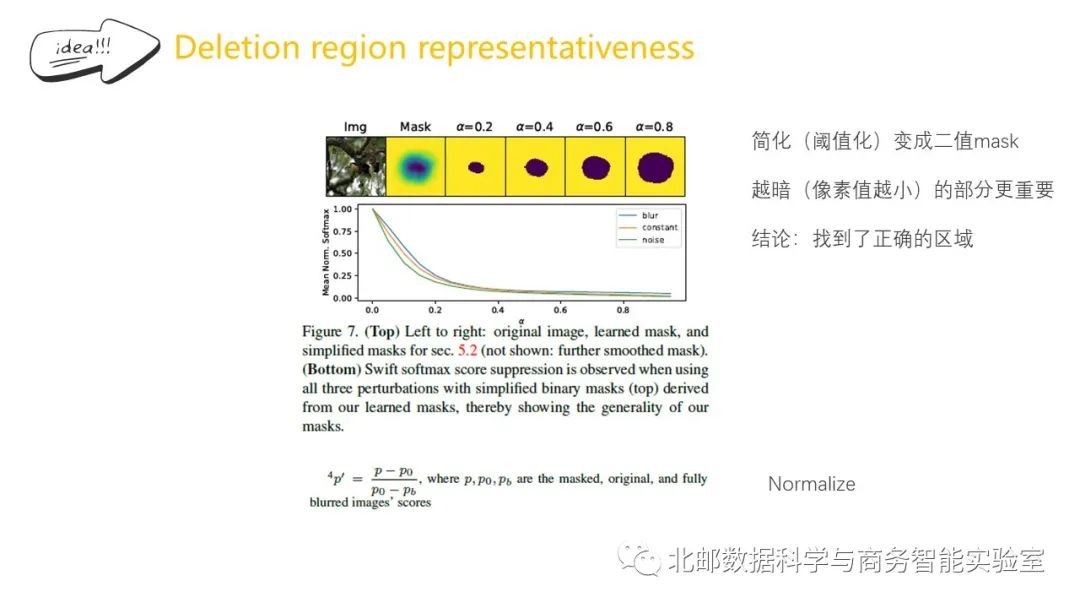

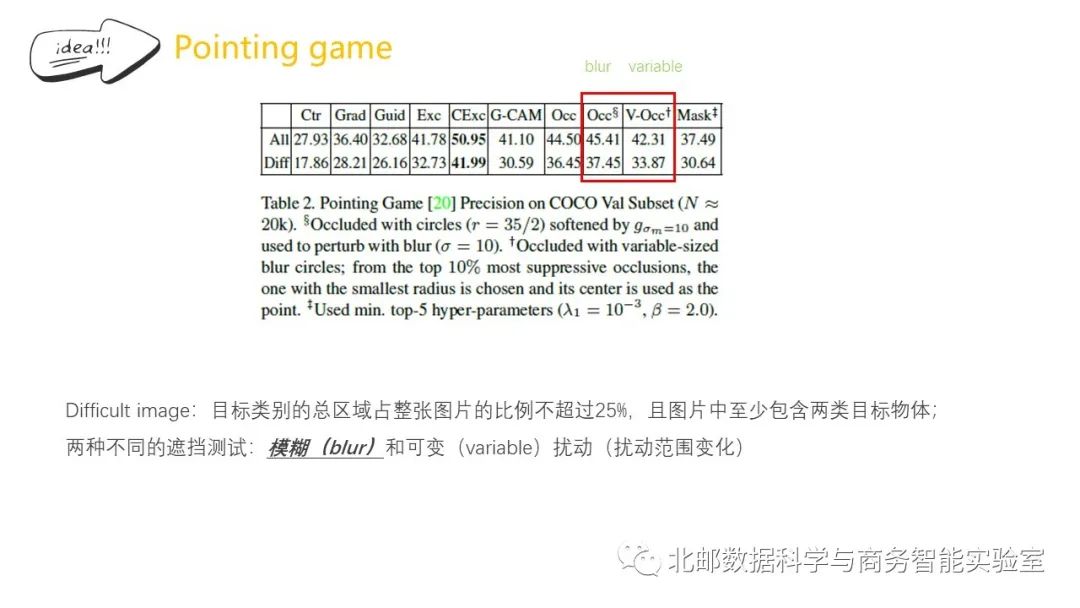

在本文中,我们主要做了两个贡献:第一,我们提出了一个通用的框架,用于学习任何黑箱算法的不同类型的解释。其次,我们对该框架进行特殊化,以找到图像中对分类器决策最重要的部分。与以前的工作不同,我们的方法是模型不可知的,并且是可测试的,因为它基于显式的和可解释的图像扰动。

英文摘要:

As machine learning algorithms areincreasingly applied to high impact yet high risk tasks, such as medicaldiagnosis or autonomous driving, it is critical that researchers can explainhow such algorithms arrived at their predictions. In recent years, a number ofimage saliency methods have been developed to summarize where highly complex neuralnetworks “look” in an image for evidence for their predictions. However, these techniquesare limited by their heuristic nature and architectural constraints.

In this paper, we make two maincontributions: First, we propose a general framework for learning different kindsof explanations for any black box algorithm. Second, we specialise theframework to find the part of an image most responsible for a classifier decision.Unlike previous works, our method is model-agnostic and testable because it is groundedin explicit and interpretable image perturbations.

文献总结:

点击“阅读原文”,了解论文详情!