
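1. Test Environment Setup

Test environment:

Doris: 4 virtual machines, each with 4 cores, 8 GB RAM, and a 150 GB standard disk.

Hive: 4 virtual machines, each with 4 cores, 8 GB RAM, and a 150 GB standard disk.

Hive on Spark: same as Hive.

Test data:

Roughly 700 million rows (count(*) returns 767,830,000).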

hive> desc ods_fact_sale_orc;

OK

id bigint

sale_date string

prod_name string

sale_nums int

Time taken: 0.202 seconds, Fetched: 4 row(s)

Test queries:

select * from ods_fact_sale_orc where id = 100;

select count(*) from ods_fact_sale_orc;

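Note:

Because these are low-spec virtual machines and every setting was left at its default, the results should be treated as a rough reference only.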
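Since every timing in this post comes from a single run, one way to make such a comparison more repeatable is to run each query several times and report the median. The sketch below shows that idea only. `time_query` and `fake_run_query` are hypothetical names, and the stand-in runner would have to be replaced by a real Hive or Doris client call, which is not shown here.

```python
import statistics
import time


def time_query(run_query, sql, repeats=3):
    """Run `sql` through `run_query` `repeats` times; return the median wall-clock seconds."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        run_query(sql)
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def fake_run_query(sql):
    """Stand-in for a real client call (hypothetical); it only sleeps briefly."""
    time.sleep(0.01)


if __name__ == "__main__":
    elapsed = time_query(fake_run_query, "select count(*) from ods_fact_sale_orc")
    print(f"median: {elapsed:.3f} s")
```

The median is used rather than the mean so that one slow run (for example a cold cache or a session start) does not dominate the reported figure.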

2. Test Results

2.1 Hive

hive> select * from ods_fact_sale_orc where id = 100;

Query ID = root_20211208104736_0c2ab392-2f47-4b42-94fc-c2daf14a2f7d

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks is set to 0 since there's no reduce operator

21/12/08 10:47:38 INFO client.ConfiguredRMFailoverProxyProvider: Failing over to rm69

Starting Job = job_1638236643110_0032, Tracking URL = http://hp3:8088/proxy/application_1638236643110_0032/

Kill Command = /opt/cloudera/parcels/CDH-6.3.1-1.cdh6.3.1.p0.1470567/lib/hadoop/bin/hadoop job -kill job_1638236643110_0032

Hadoop job information for Stage-1: number of mappers: 9; number of reducers: 0

2021-12-08 10:47:45,948 Stage-1 map = 0%, reduce = 0%

2021-12-08 10:47:55,366 Stage-1 map = 11%, reduce = 0%, Cumulative CPU 5.48 sec

2021-12-08 10:47:58,483 Stage-1 map = 56%, reduce = 0%, Cumulative CPU 44.13 sec

2021-12-08 10:48:05,773 Stage-1 map = 67%, reduce = 0%, Cumulative CPU 54.31 sec

2021-12-08 10:48:07,831 Stage-1 map = 78%, reduce = 0%, Cumulative CPU 63.71 sec

2021-12-08 10:48:08,867 Stage-1 map = 89%, reduce = 0%, Cumulative CPU 73.08 sec

2021-12-08 10:48:09,896 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 82.58 sec

MapReduce Total cumulative CPU time: 1 minutes 22 seconds 580 msec

Ended Job = job_1638236643110_0032

MapReduce Jobs Launched:

Stage-Stage-1: Map: 9 Cumulative CPU: 82.58 sec HDFS Read: 2150344344 HDFS Write: 830 HDFS EC Read: 0 SUCCESS

Total MapReduce CPU Time Spent: 1 minutes 22 seconds 580 msec

OK

100 2012-07-09 00:00:00.0 PROD9 38

Time taken: 34.411 seconds, Fetched: 1 row(s)

hive>

> select count(*) from ods_fact_sale_orc;

Query ID = root_20211208104925_0b4ece78-2735-4ebb-bab9-431aae074e11

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks determined at compile time: 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

21/12/08 10:49:25 INFO client.ConfiguredRMFailoverProxyProvider: Failing over to rm69

Starting Job = job_1638236643110_0033, Tracking URL = http://hp3:8088/proxy/application_1638236643110_0033/

Kill Command = /opt/cloudera/parcels/CDH-6.3.1-1.cdh6.3.1.p0.1470567/lib/hadoop/bin/hadoop job -kill job_1638236643110_0033

Hadoop job information for Stage-1: number of mappers: 9; number of reducers: 1

2021-12-08 10:49:32,158 Stage-1 map = 0%, reduce = 0%

2021-12-08 10:49:39,433 Stage-1 map = 11%, reduce = 0%, Cumulative CPU 3.87 sec

2021-12-08 10:49:40,465 Stage-1 map = 22%, reduce = 0%, Cumulative CPU 9.17 sec

2021-12-08 10:49:41,497 Stage-1 map = 56%, reduce = 0%, Cumulative CPU 25.21 sec

2021-12-08 10:49:45,624 Stage-1 map = 67%, reduce = 0%, Cumulative CPU 30.72 sec

2021-12-08 10:49:46,653 Stage-1 map = 78%, reduce = 0%, Cumulative CPU 35.9 sec

2021-12-08 10:49:47,685 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 46.3 sec

2021-12-08 10:49:53,847 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 48.87 sec

MapReduce Total cumulative CPU time: 48 seconds 870 msec

Ended Job = job_1638236643110_0033

MapReduce Jobs Launched:

Stage-Stage-1: Map: 9 Reduce: 1 Cumulative CPU: 48.87 sec HDFS Read: 1992485 HDFS Write: 109 HDFS EC Read: 0 SUCCESS

Total MapReduce CPU Time Spent: 48 seconds 870 msec

OK

767830000

Time taken: 29.842 seconds, Fetched: 1 row(s)

hive>

2.2 Hive on Spark

hive>

> set hive.execution.engine=spark;

hive> select count(*) from ods_fact_sale_orc;

Query ID = root_20211208105129_f4675518-560e-43e7-b4d7-c4db0a5d52c4

Total jobs = 1

Launching Job 1 out of 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

Running with YARN Application = application_1638236643110_0034

Kill Command = /opt/cloudera/parcels/CDH-6.3.1-1.cdh6.3.1.p0.1470567/lib/hadoop/bin/yarn application -kill application_1638236643110_0034

Hive on Spark Session Web UI URL: http://hp4:10421

Query Hive on Spark job[0] stages: [0, 1]

Spark job[0] status = RUNNING

--------------------------------------------------------------------------------------

STAGES ATTEMPT STATUS TOTAL COMPLETED RUNNING PENDING FAILED

--------------------------------------------------------------------------------------

Stage-0 ........ 0 FINISHED 9 9 0 0 0

Stage-1 ........ 0 FINISHED 1 1 0 0 0

--------------------------------------------------------------------------------------

STAGES: 02/02 [==========================>>] 100% ELAPSED TIME: 11.12 s

--------------------------------------------------------------------------------------

Spark job[0] finished successfully in 11.12 second(s)

Spark Job[0] Metrics: TaskDurationTime: 25265, ExecutorCpuTime: 15802, JvmGCTime: 469, BytesRead / RecordsRead: 1979283 / 749902, BytesReadEC: 0, ShuffleTotalBytesRead / ShuffleRecordsRead: 522 / 9, ShuffleBytesWritten / ShuffleRecordsWritten: 522 / 9

OK

767830000

Time taken: 30.556 seconds, Fetched: 1 row(s)

hive>

> select * from ods_fact_sale_orc where id = 100;

Query ID = root_20211208105212_f5968f86-89ab-4e44-b712-4a687a7bec7f

Total jobs = 1

Launching Job 1 out of 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

Running with YARN Application = application_1638236643110_0034

Kill Command = /opt/cloudera/parcels/CDH-6.3.1-1.cdh6.3.1.p0.1470567/lib/hadoop/bin/yarn application -kill application_1638236643110_0034

Hive on Spark Session Web UI URL: http://hp4:10421

Query Hive on Spark job[1] stages: [2]

Spark job[1] status = RUNNING

--------------------------------------------------------------------------------------

STAGES ATTEMPT STATUS TOTAL COMPLETED RUNNING PENDING FAILED

--------------------------------------------------------------------------------------

Stage-2 ........ 0 FINISHED 9 9 0 0 0

--------------------------------------------------------------------------------------

STAGES: 01/01 [==========================>>] 100% ELAPSED TIME: 15.08 s

--------------------------------------------------------------------------------------

Spark job[1] finished successfully in 15.08 second(s)

Spark Job[1] Metrics: TaskDurationTime: 49170, ExecutorCpuTime: 42275, JvmGCTime: 2493, BytesRead / RecordsRead: 2150340258 / 749902, BytesReadEC: 0, ShuffleTotalBytesRead / ShuffleRecordsRead: 0 / 0, ShuffleBytesWritten / ShuffleRecordsWritten: 0 / 0

OK

100 2012-07-09 00:00:00.0 PROD9 38

Time taken: 15.237 seconds, Fetched: 1 row(s)

hive>

2.3 Doris

mysql> select * from table3 where id = 100;

+------+-----------------------+-----------+-----------+

| id | sale_date | prod_name | sale_nums |

+------+-----------------------+-----------+-----------+

| 100 | 2012-07-09 00:00:00.0 | PROD9 | 38 |

+------+-----------------------+-----------+-----------+

1 row in set (0.03 sec)

mysql> select count(*) from table3;

+-----------+

| count(*) |

+-----------+

| 767830000 |

+-----------+

1 row in set (17.92 sec)

2.4 Summary of Results

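| Engine | Single-row lookup time | count(*) time |
|---|---|---|
| Hive | 34 s | 30 s |
| Hive on Spark | 15 s | 11 s |
| Doris | 0.03 s | 18 s |

The Hive on Spark count(*) figure is the Spark job's elapsed time (11.12 s). The wall-clock time Hive reported for that query, the first one in the session, was 30.556 s, which probably includes starting the Spark session.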

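Two simple conclusions can be drawn:

- For the single-row lookup, Doris (0.03 s) is far faster than both Hive (34 s) and Hive on Spark (15 s).

- For count(*), Doris (18 s) is somewhat slower than Hive on Spark (11 s), though still faster than Hive (30 s).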
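To put numbers on these conclusions, the short sketch below computes how many times longer each Hive variant took than Doris, using the timings copied from the logs above. The dictionary layout and the helper name `ratio_vs_doris` are illustrative choices, not part of the original test.

```python
# Timings in seconds, copied from the logs above.
# The Hive on Spark count figure is the Spark job elapsed time (11.12 s),
# not the 30.556 s wall-clock "Time taken" of that first query.
timings = {
    "Hive": {"single_row": 34.411, "count": 29.842},
    "Hive on Spark": {"single_row": 15.237, "count": 11.12},
    "Doris": {"single_row": 0.03, "count": 17.92},
}


def ratio_vs_doris(engine, query):
    """Return how many times longer `engine` took than Doris for `query`."""
    return timings[engine][query] / timings["Doris"][query]


for engine in ("Hive", "Hive on Spark"):
    for query in ("single_row", "count"):
        print(f"{engine:14s} {query:10s} {ratio_vs_doris(engine, query):8.2f}x")
```

On these figures the single-row lookup is roughly 1147 times slower on Hive and roughly 508 times slower on Hive on Spark than on Doris, while for count(*) Hive takes about 1.67 times as long as Doris and Hive on Spark about 0.62 times as long.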

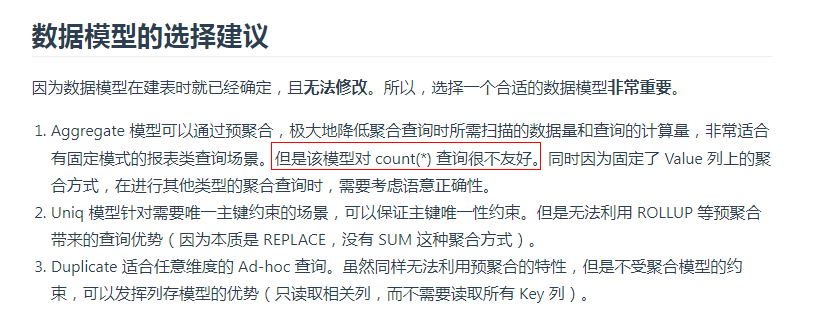

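The screenshot above is from the official Doris website. It suggests that, with the Aggregate model, count(*) is a somewhat unfair test for Doris.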
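The remark about the Aggregate model can be illustrated with a toy model. As I understand the Doris documentation, rows that share a key but were loaded in separate batches have to be merged before count(*) can return a semantically correct answer, so the query cannot simply add up stored row counts. The code below is not Doris code; the batch layout and both function names are hypothetical, and whether `table3` in this test actually uses the Aggregate model is not stated in the post.

```python
# Toy model only: each batch is a list of (key, value) rows, as if loaded separately.
batches = [
    [(1, 10), (2, 20)],
    [(2, 5), (3, 30)],  # key 2 appears again in a later batch
]


def count_star(batches):
    """Merge every batch by key (SUM on value), then count the merged keys."""
    merged = {}
    for batch in batches:
        for key, value in batch:
            merged[key] = merged.get(key, 0) + value
    return len(merged)


def stored_row_count(batches):
    """Count physically stored rows without merging; over-counts repeated keys."""
    return sum(len(batch) for batch in batches)


print(count_star(batches))        # 3
print(stored_row_count(batches))  # 4
```

The cheap shortcut (4) disagrees with the merged answer (3) as soon as one key repeats across batches, which is why the merge step, and with it a scan of the key columns, cannot be skipped in this model.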

Further tests will be added later…

