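Training a traditional machine learning model requires a large amount of labeled data, and each model is designed for one specific task, so it is of little use when faced with a problem from an entirely new domain. Transfer learning addresses this by carrying knowledge learned in one domain over to another. A model can then "infer three from one" (举一反三), which often improves performance on the new task noticeably, especially when labeled data for it is scarce.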

VGG-16 Architecture

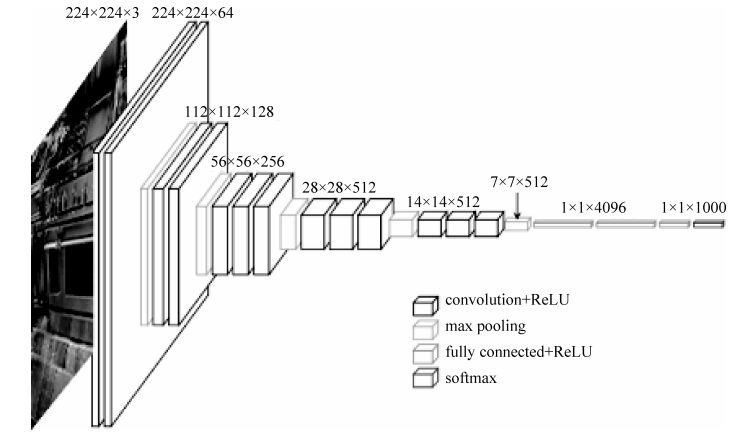

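VGG-16 consists of 13 convolutional layers, 3 fully connected layers and 5 pooling layers. The convolutional and fully connected layers carry weights, while the pooling layers have none. These 13 + 3 = 16 weight layers are where the name VGG-16 comes from, as shown in Figure 6.36.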

■ Figure 6.36 VGG-16 model architecture
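A little arithmetic confirms the layer count and the 7 × 7 × 512 size that `self.flatten` uses in Appendix E. This sketch assumes the standard VGG-16 layout. In that layout, 3 × 3 convolutions with 'SAME' padding keep the spatial size, and 2 × 2 max pooling with stride 2 halves it. The names `VGG16_CFG`, `FC_UNITS` and `summarize` are illustrative only.

```python
# Standard VGG-16 layout (assumed): integers are the output channels of a 3x3 conv
# layer, "M" marks a 2x2 max-pooling layer with stride 2.
VGG16_CFG = [64, 64, "M",
             128, 128, "M",
             256, 256, 256, "M",
             512, 512, 512, "M",
             512, 512, 512, "M"]
FC_UNITS = [4096, 4096, 1000]  # the three original fully connected layers


def summarize(cfg, fc_units, input_size=224, in_channels=3):
    """Count layer types and trace the feature-map shape through the conv stack."""
    size, channels = input_size, in_channels
    n_conv = n_pool = 0
    for layer in cfg:
        if layer == "M":
            n_pool += 1
            size //= 2          # 2x2 pooling with stride 2 halves height and width
        else:
            n_conv += 1
            channels = layer    # 'SAME' padding keeps height and width unchanged
    return {
        "conv": n_conv,
        "pool": n_pool,
        "fc": len(fc_units),
        "weight_layers": n_conv + len(fc_units),  # pooling layers have no weights
        "final_feature_map": (size, size, channels),
    }


summary = summarize(VGG16_CFG, FC_UNITS)
print(summary)
# {'conv': 13, 'pool': 5, 'fc': 3, 'weight_layers': 16, 'final_feature_map': (7, 7, 512)}
```

The final (7, 7, 512) map flattens to 7 × 7 × 512 = 25,088 values. That is the input width of the new fully connected layers.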

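We keep VGG-16's convolutional layers and replace its fully connected layers. This transfers the network from image classification to a different task: estimating an animal's body length.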

Transfer Learning Process

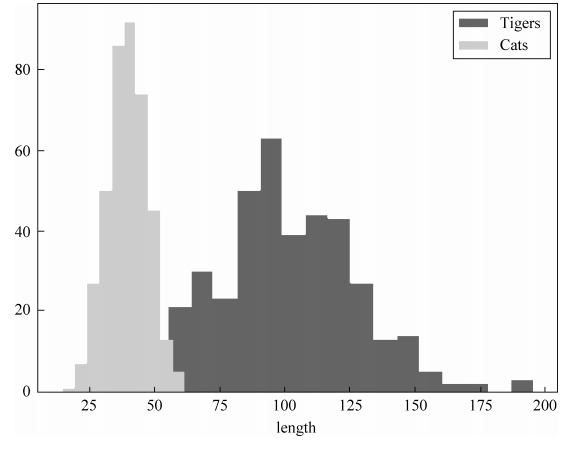

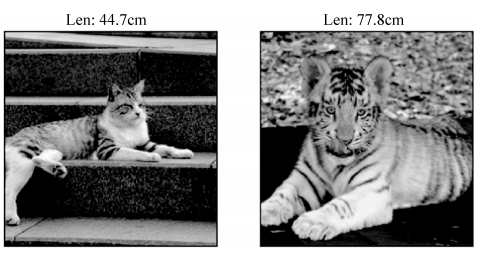

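First, obtain the training dataset. This experiment uses images of cats and tigers, two of the 1,000 categories that VGG-16 was originally trained to classify. Then body-length values are assigned to the cats and tigers. These values are synthetic; Appendix E generates them randomly. Their distribution is shown in Figure 6.37.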

■ Figure 6.37 Distribution of cat and tiger body-length data
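In Appendix E, `load_data()` draws tiger lengths from a normal distribution with mean 100 and standard deviation 30, clipped below at 20. It draws cat lengths from mean 40 and standard deviation 8, clipped below at 10. The standalone version below reproduces that step. It makes two changes: a seeded `np.random.default_rng` replaces the global `np.random.randn` for reproducibility, and the helper name `fake_lengths` is hypothetical.

```python
import numpy as np


def fake_lengths(n_tigers, n_cats, seed=0):
    """Synthetic body lengths mirroring load_data(): one column per sample."""
    rng = np.random.default_rng(seed)
    # Tigers: N(100, 30^2), never shorter than 20
    tigers_y = np.maximum(20, rng.standard_normal((n_tigers, 1)) * 30 + 100)
    # Cats: N(40, 8^2), never shorter than 10
    cats_y = np.maximum(10, rng.standard_normal((n_cats, 1)) * 8 + 40)
    return tigers_y, cats_y


tigers_y, cats_y = fake_lengths(400, 400)
print(tigers_y.shape, cats_y.shape)   # (400, 1) (400, 1)
print(round(float(tigers_y.mean()), 1), round(float(cats_y.mean()), 1))
```

These labels are synthetic, so any "length" the model later reports is only meaningful relative to these two distributions.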

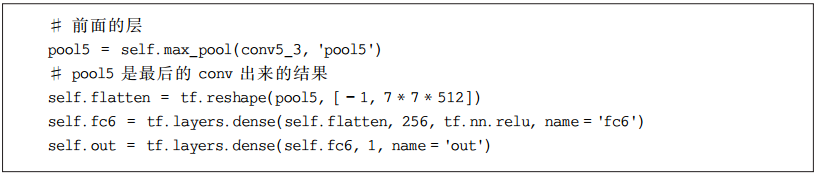

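We reuse the pre-trained VGG-16 model parameters and keep its convolution and pooling layers. The fully connected layers are replaced with a trainable two-layer structure: in Appendix E, a 256-unit ReLU layer followed by a single linear output unit. Its output is a number representing the body length of the cat or tiger.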
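The replacement head is small compared with the frozen convolutional stack. The NumPy sketch below mirrors the `fc6` layer (256 ReLU units) and the `out` layer (1 linear unit) from Appendix E. It shows the head's forward pass and counts its trainable parameters. The scaled Gaussian initialisation and the names `init_head`/`head_forward` are assumptions; `tf.layers.dense` uses its own default initializer.

```python
import numpy as np

FEATURES = 7 * 7 * 512   # size of the flattened pool5 output
HIDDEN = 256             # units in the new fc6 layer


def init_head(rng):
    """Randomly initialised weights for the two trainable layers (fc6 and out)."""
    return {
        "w1": rng.standard_normal((FEATURES, HIDDEN)) * 0.01,
        "b1": np.zeros(HIDDEN),
        "w2": rng.standard_normal((HIDDEN, 1)) * 0.01,
        "b2": np.zeros(1),
    }


def head_forward(params, pool5):
    """pool5: (batch, 7, 7, 512) feature maps -> (batch, 1) predicted lengths."""
    flat = pool5.reshape(-1, FEATURES)                             # like self.flatten
    hidden = np.maximum(0.0, flat @ params["w1"] + params["b1"])   # dense + ReLU
    return hidden @ params["w2"] + params["b2"]                    # linear output


rng = np.random.default_rng(0)
params = init_head(rng)
pool5 = rng.standard_normal((6, 7, 7, 512))   # stand-in for a batch of 6 images
print(head_forward(params, pool5).shape)      # (6, 1)
print(sum(p.size for p in params.values()))   # 6423041 trainable parameters
```

Nearly all of these parameters sit in the first dense layer (25,088 × 256 weights). The single output unit adds only 257.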

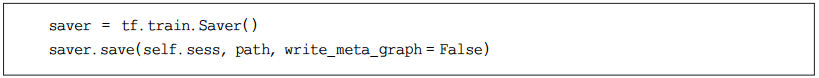

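None of the layers before self.flatten can be trained. In Appendix E, conv_layer() passes the pre-trained weights to tf.nn.conv2d as fixed arrays rather than as variables. The layers created by tf.layers.dense(), by contrast, are trainable. After training, a Saver is defined to save the parameters created by tf.layers.dense().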
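In the TensorFlow 1.x listing, the convolutional weights become graph constants rather than variables. A default `tf.train.Saver()` therefore only has the dense-layer variables (and the optimizer's state) to write. The sketch below shows the same idea without a framework: only the trainable head is saved and later reloaded onto a fixed backbone. It uses NumPy's `np.savez`/`np.load` as a swapped-in illustration, not the TensorFlow checkpoint format. `save_head` and `load_head` are hypothetical names.

```python
import os
import tempfile

import numpy as np


def save_head(params, path):
    """Write only the trainable head parameters (e.g. fc6/out weights) to an .npz file."""
    np.savez(path, **params)


def load_head(path):
    """Read the head parameters back into a plain dict of arrays."""
    with np.load(path) as data:
        return {name: data[name] for name in data.files}


rng = np.random.default_rng(0)
head = {"w1": rng.standard_normal((8, 4)), "b1": np.zeros(4),
        "w2": rng.standard_normal((4, 1)), "b2": np.zeros(1)}

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "transfer_head.npz")
    save_head(head, path)
    restored = load_head(path)

print(sorted(restored))                                          # ['b1', 'b2', 'w1', 'w2']
print(all(np.array_equal(head[k], restored[k]) for k in head))   # True
```

The backbone never changes, so its weights need not be saved with the model. At prediction time they are loaded again from the original pre-trained file, which is what `Vgg16(vgg16_npy_path=..., restore_from=...)` does in `eval()`.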

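Once trained, the convolutional part of VGG-16 acts as a feature extractor that extracts and compresses image features. These features are then used to train a regressor. The target is a continuous length, so the new head ends in a single linear unit trained with mean squared error rather than a softmax classifier.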
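This division of labour can be illustrated without TensorFlow: a fixed feature extractor feeds a regressor trained with mean squared error. In the NumPy sketch below, a fixed random projection with ReLU stands in for the pre-trained convolutional layers, an assumption made only to keep the example small. A single linear layer stands in for the new head, and only that head is updated by gradient descent.

```python
import numpy as np

rng = np.random.default_rng(0)

# Stand-in for the frozen convolutional stack: a fixed random projection + ReLU.
W_FROZEN = rng.standard_normal((20, 8)) * 0.3


def extract_features(x):
    """Frozen feature extractor: W_FROZEN is read here but never updated."""
    return np.maximum(0.0, x @ W_FROZEN)


def mse(pred, target):
    return float(np.mean((pred - target) ** 2))


# Toy inputs with a continuous target (a "length"), not class labels.
x = rng.standard_normal((64, 20))
y = extract_features(x) @ rng.standard_normal((8, 1)) + 3.0

frozen_before = W_FROZEN.copy()
features = extract_features(x)   # computed once, because the extractor never changes
w, b = np.zeros((8, 1)), 0.0     # trainable linear regressor
lr = 0.01

loss_start = mse(features @ w + b, y)
for _ in range(500):
    err = features @ w + b - y                  # (64, 1) residuals
    w -= lr * 2.0 * features.T @ err / len(x)   # gradient of the MSE w.r.t. w
    b -= lr * 2.0 * float(err.mean())           # gradient of the MSE w.r.t. b
loss_end = mse(features @ w + b, y)

print(loss_start, loss_end)
print(np.array_equal(W_FROZEN, frozen_before))  # True: only w and b were trained
```

The extractor is fixed, so its features can be computed once and reused in every step. The same fact lets the TensorFlow graph treat the VGG-16 convolution weights as constants.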

At this point transfer learning is complete, and the model can be tested.

Transfer Learning Results

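We pass in two images, one of a cat and one of a tiger, and the transfer-learned model outputs a body length for each, as shown in Figure 6.38.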

■ Figure 6.38 Output of the transfer learning model

See Appendix E for the complete example program.

Appendix E VGG-16 Transfer Learning

import os

import numpy as np
# This listing uses the TensorFlow 1.x API (tf.placeholder, tf.Session, tf.layers).
import tensorflow as tf
import skimage.io
import skimage.transform
import matplotlib.pyplot as plt


def load_img(path):
    img = skimage.io.imread(path)
    img = img / 255.0  # scale pixel values to [0, 1]
    # print("Original image shape:", img.shape)
    # we crop image from center
    short_edge = min(img.shape[:2])
    yy = (img.shape[0] - short_edge) // 2
    xx = (img.shape[1] - short_edge) // 2
    crop_img = img[yy: yy + short_edge, xx: xx + short_edge]
    # resize to 224, 224
    resized_img = skimage.transform.resize(crop_img, (224, 224))[None, :, :, :]  # shape [1, 224, 224, 3]
    return resized_img

def load_data():
    imgs = {'tiger': [], 'kittycat': []}
    for k in imgs.keys():
        data_dir = './for_transfer_learning/data/' + k
        for file in os.listdir(data_dir):
            if not file.lower().endswith('.jpg'):
                continue
            try:
                resized_img = load_img(os.path.join(data_dir, file))
            except OSError:
                continue
            imgs[k].append(resized_img)  # [1, height, width, depth] * n
            if len(imgs[k]) == 400:  # only use 400 imgs to reduce my memory load
                break
    # fake length data for tiger and cat
    tigers_y = np.maximum(20, np.random.randn(len(imgs['tiger']), 1) * 30 + 100)
    cat_y = np.maximum(10, np.random.randn(len(imgs['kittycat']), 1) * 8 + 40)
    return imgs['tiger'], imgs['kittycat'], tigers_y, cat_y

class Vgg16:
    vgg_mean = [103.939, 116.779, 123.68]  # per-channel means in BGR order

    def __init__(self, vgg16_npy_path=None, restore_from=None):
        # pre-trained parameters
        try:
            self.data_dict = np.load(vgg16_npy_path, allow_pickle=True, encoding='latin1').item()
        except FileNotFoundError:
            print('Please download the pre-trained VGG-16 parameters (vgg16.npy) first')
            raise

        self.tfx = tf.placeholder(tf.float32, [None, 224, 224, 3])
        self.tfy = tf.placeholder(tf.float32, [None, 1])

        # Convert RGB to BGR
        red, green, blue = tf.split(axis=3, num_or_size_splits=3, value=self.tfx * 255.0)
        bgr = tf.concat(axis=3, values=[
            blue - self.vgg_mean[0],
            green - self.vgg_mean[1],
            red - self.vgg_mean[2],
        ])

        # pre-trained VGG layers are fixed in fine-tune
        conv1_1 = self.conv_layer(bgr, "conv1_1")
        conv1_2 = self.conv_layer(conv1_1, "conv1_2")
        pool1 = self.max_pool(conv1_2, 'pool1')

        conv2_1 = self.conv_layer(pool1, "conv2_1")
        conv2_2 = self.conv_layer(conv2_1, "conv2_2")
        pool2 = self.max_pool(conv2_2, 'pool2')

        conv3_1 = self.conv_layer(pool2, "conv3_1")
        conv3_2 = self.conv_layer(conv3_1, "conv3_2")
        conv3_3 = self.conv_layer(conv3_2, "conv3_3")
        pool3 = self.max_pool(conv3_3, 'pool3')

        conv4_1 = self.conv_layer(pool3, "conv4_1")
        conv4_2 = self.conv_layer(conv4_1, "conv4_2")
        conv4_3 = self.conv_layer(conv4_2, "conv4_3")
        pool4 = self.max_pool(conv4_3, 'pool4')

        conv5_1 = self.conv_layer(pool4, "conv5_1")
        conv5_2 = self.conv_layer(conv5_1, "conv5_2")
        conv5_3 = self.conv_layer(conv5_2, "conv5_3")
        pool5 = self.max_pool(conv5_3, 'pool5')

        # detach the original VGG fc layers and
        # build your own fc layers to serve your own purpose
        self.flatten = tf.reshape(pool5, [-1, 7 * 7 * 512])
        self.fc6 = tf.layers.dense(self.flatten, 256, tf.nn.relu, name='fc6')
        self.out = tf.layers.dense(self.fc6, 1, name='out')

        self.sess = tf.Session()
        if restore_from:
            saver = tf.train.Saver()
            saver.restore(self.sess, restore_from)
        else:  # training graph
            self.loss = tf.losses.mean_squared_error(labels=self.tfy, predictions=self.out)
            self.train_op = tf.train.RMSPropOptimizer(0.001).minimize(self.loss)
            self.sess.run(tf.global_variables_initializer())

    def max_pool(self, bottom, name):
        return tf.nn.max_pool(bottom, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME', name=name)

    def conv_layer(self, bottom, name):
        with tf.variable_scope(name):  # CNN's filter is constant, NOT Variable that can be trained
            conv = tf.nn.conv2d(bottom, self.data_dict[name][0], [1, 1, 1, 1], padding='SAME')
            lout = tf.nn.relu(tf.nn.bias_add(conv, self.data_dict[name][1]))
            return lout

    def train(self, x, y):
        loss, _ = self.sess.run([self.loss, self.train_op], {self.tfx: x, self.tfy: y})
        return loss

    def predict(self, paths):
        fig, axs = plt.subplots(1, 2)
        for i, path in enumerate(paths):
            x = load_img(path)
            length = self.sess.run(self.out, {self.tfx: x})  # shape (1, 1)
            axs[i].imshow(x[0])
            axs[i].set_title('Len: %.1f cm' % length[0, 0])
            axs[i].set_xticks(())
            axs[i].set_yticks(())
        plt.show()

    def save(self, path='./for_transfer_learning/model/transfer_learn'):
        saver = tf.train.Saver()
        saver.save(self.sess, path, write_meta_graph=False)

def train():

tigers_x, cats_x, tigers_y, cats_y = load_data()

# plot fake length distribution

plt.hist(tigers_y, bins=20, label='Tigers')

plt.hist(cats_y, bins=10, label='Cats')

plt.legend()

plt.xlabel('length')

plt.show()

xs = np.concatenate(tigers_x + cats_x, axis=0)

ys = np.concatenate((tigers_y, cats_y), axis=0)

vgg = Vgg16(vgg16_npy_path='./for_transfer_learning/vgg16.npy')

print('Net built')

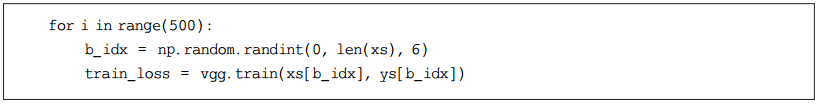

for i in range(100):

b_idx = np.random.randint(0, len(xs), 6)

train_loss = vgg.train(xs[b_idx], ys[b_idx])

print(i, 'train loss: ', train_loss)

vgg.save('./for_transfer_learning/model/transfer_learn') # save learned fc layers

def eval():

vgg = Vgg16(vgg16_npy_path='./for_transfer_learning/vgg16.npy',

restore_from='./for_transfer_learning/model/transfer_learn')

vgg.predict(

['./for_transfer_learning/data/kittycat/23066047.d6694f.jpg', './for_transfer_learning/data/tiger/37425296_58a9896259.jpg'])

if __name__ == '__main__':

# download()

#train()

eval()
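Two preprocessing steps in the listing are easy to miss. First, `load_img()` centre-crops each image to a square before resizing it to 224 × 224. Second, the graph converts RGB to BGR and subtracts the per-channel means stored in `vgg_mean`, matching the input convention of the pre-trained VGG-16 weights. The NumPy sketch below reproduces both steps (without the resize) on a hypothetical image. The helper names `center_crop` and `to_vgg_input` are illustrative.

```python
import numpy as np

VGG_MEAN_BGR = np.array([103.939, 116.779, 123.68])  # same values as Vgg16.vgg_mean


def center_crop(img):
    """Crop the largest centred square, as load_img() does before resizing."""
    short_edge = min(img.shape[:2])
    yy = (img.shape[0] - short_edge) // 2
    xx = (img.shape[1] - short_edge) // 2
    return img[yy: yy + short_edge, xx: xx + short_edge]


def to_vgg_input(img01):
    """[0, 1] RGB image -> mean-subtracted BGR in the 0-255 range, like the graph code."""
    bgr = img01[..., ::-1] * 255.0          # reverse channel order: RGB -> BGR
    return bgr - VGG_MEAN_BGR               # subtract the per-channel means


img = np.zeros((300, 400, 3))               # hypothetical 300x400 RGB image in [0, 1]
img[..., 0] = 1.0                           # pure red
square = center_crop(img)
print(square.shape)                         # (300, 300, 3)
print(to_vgg_input(square)[0, 0])
```

A pure red pixel becomes (0, 0, 255) in BGR order. Only its last channel ends up positive after the means are subtracted.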


Reference book

《人工智能》 (Artificial Intelligence)

ISBN: 9787302572541

Compiled by Shang Wenqian (尚文倩)


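Book Description

This book systematically introduces the basic principles, techniques, methods and application areas of artificial intelligence. It gives a fairly comprehensive picture of the field's progress over more than 60 years and selects traditional material in light of current trends in AI. The book has 9 chapters. Chapter 1 introduces the basic concepts, history and application areas of AI. The remaining 8 chapters fall into two parts:

- Part 1 (Chapters 2 to 5) covers the basic concepts, principles, methods and techniques of traditional AI, including knowledge representation, search strategies, deterministic reasoning and uncertain reasoning.
- Part 2 (Chapters 6 to 9) covers newer techniques and methods of modern AI, including machine learning, data mining, big data and deep learning.

The book provides 8 hands-on project cases, and each chapter ends with exercises. It is intended mainly as a textbook for computer science and related courses, and can also serve as a reference for practitioners.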
